AI phone scams reset caller ID trust

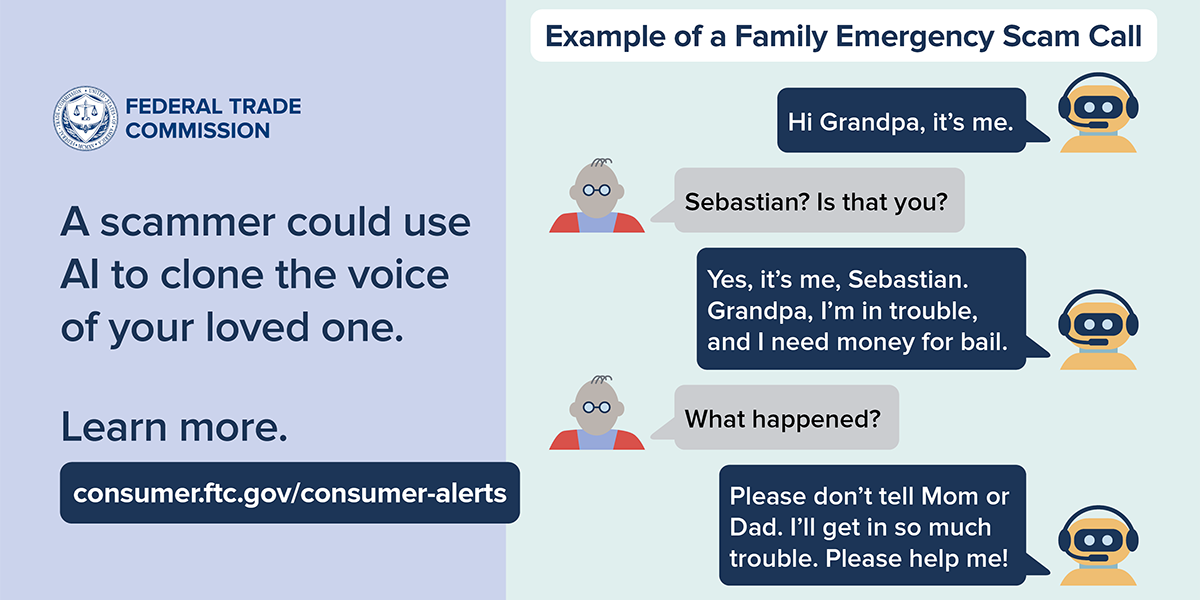

AI phone scams are no longer glitchy curiosities; they run on cheap generative models that can clone a bank rep’s voice in minutes. For consumers already drowning in spam calls, the threat is simple: your phone’s strongest trust signal, the human voice, just got compromised. Regulators and carriers promised STIR/SHAKEN would clean up caller ID, yet cloned voices ride on top of verified numbers, slipping past both compliance checks and instinct. The BBC report underscores how a single convincing call can move life savings before a human reviewer ever sees the transaction. The industry keeps celebrating frictionless onboarding, but criminals exploit the same automation to operate at startup speed. This is the moment to rethink voice trust from protocol to product design, because the playbook used for ransomware is now being applied to grandma’s savings account.

- Synthetic voice cloning now outruns caller ID and compliance controls.

- Financial institutions need layered verification that treats voice as a risky signal, not a trust anchor.

- Regulators are starting to define what counts as consent for AI-assisted calls, but enforcement is uneven.

- Consumers can slash exposure with call-back protocols and code words rather than gut feelings.

- Authenticated audio and device-side anomaly detection are poised to become the new spam filters.

AI phone scams are outpacing caller ID

How synthetic voices leap past STIR/SHAKEN

Caller ID authentication through STIR/SHAKEN was built to verify the number, not the person. Generative tools let attackers rent a clean VoIP line, obtain a valid STIR/SHAKEN attestation, then layer a cloned branch manager’s voice on top. Because the framework signs the call signaling rather than the audio stream, the voice itself is never attested, and carriers cannot fingerprint it without turning networks into surveillance systems. Spam filters built on automatic speech recognition (ASR) look for boilerplate phrases, yet a synthetic rep can respond dynamically to objections and stay under statistical thresholds. The lesson: protocol compliance is necessary but not sufficient when deception lives in the content rather than the signaling.
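
To make the "number, not the person" point concrete, here is a minimal sketch that decodes the claims carried in a SHAKEN-style PASSporT (the signed token behind STIR/SHAKEN). The token is built locally with a placeholder signature, the phone numbers, certificate URL, and `origid` are made-up illustrative values, and the helper names are ours rather than any library's API. Signature verification is deliberately left out.

```python
import base64
import json


def b64url_encode(data: bytes) -> str:
    """Base64url-encode without padding, as JWT-style tokens do."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    """Base64url-decode, restoring the padding that was stripped."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def passport_claims(token: str) -> dict:
    """Return the payload claims WITHOUT verifying the signature (illustration only)."""
    _header, payload, _signature = token.split(".")
    return json.loads(b64url_decode(payload))


# Illustrative values only; none of these identify a real caller or carrier.
example_header = {
    "alg": "ES256",
    "ppt": "shaken",
    "typ": "passport",
    "x5u": "https://cert.example.org/passport.cer",
}
example_payload = {
    "attest": "A",                      # attestation level for the calling number
    "dest": {"tn": ["12155550113"]},    # called number
    "iat": 1443208345,                  # issued-at timestamp
    "orig": {"tn": "12155550112"},      # calling number
    "origid": "123e4567-e89b-12d3-a456-426655440000",
}
example_token = ".".join([
    b64url_encode(json.dumps(example_header).encode()),
    b64url_encode(json.dumps(example_payload).encode()),
    "placeholder-signature",            # a real token carries an ES256 signature here
])

claims = passport_claims(example_token)
print(sorted(claims))  # ['attest', 'dest', 'iat', 'orig', 'origid']
```

Every claim describes numbers, timing, and who vouched for the origin. Nothing in the token says anything about the audio that follows, which is exactly the gap a cloned voice walks through.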

Behavioral engineering meets machine learning

Criminal crews are pairing large language models with breach data to craft bespoke call scripts. A cloned voice references last month’s utility payment, pauses the way a real agent does, and confirms a postcode before pushing a transfer. Each micro-behavior gets scored like an onboarding funnel. Attackers run thousands of simulations overnight, testing which intonation triggers compliance. They are not guessing; they are A/B testing your anxieties. That is why frontline staff and families both need to treat every unexpected request as a potential penetration test.

Voice used to be the last mile of trust. AI has turned it into just another surface that needs authentication and logging.

Banks respond with AI phone scam countermeasures

Network-level filters vs privacy

Telecoms are experimenting with audio fingerprints and real-time diarization to spot cloned cadence, but scanning every syllable raises surveillance questions. Banks lobby carriers to flag anomalies on calls destined for high-risk numbers, yet regulators warn against blanket monitoring. Fraud teams are building real-time risk scores that blend device reputation, call duration, and account history. If a six-figure transfer request arrives minutes after a voice call from a new device, the transaction triggers a human review. This mirrors Zero Trust networking: assume compromise, verify every layer, log everything.
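
As a rough illustration of that kind of blended score, the sketch below turns the signals mentioned above into points and routes high scorers to a human. The field names, weights, the 30-minute window, and the threshold of 70 are all assumptions chosen for readability, not values any bank has published.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TransferRequest:
    amount: float                              # in account currency
    minutes_since_voice_call: Optional[float]  # None when there was no recent call
    new_device: bool
    device_reputation: float                   # 0.0 (bad) to 1.0 (good), hypothetical feed
    call_duration_minutes: float
    payee_seen_before: bool                    # simple stand-in for account history


def risk_points(req: TransferRequest) -> int:
    """Sum hypothetical integer weights per signal, capped at 100."""
    points = 0
    if req.amount >= 100_000:                  # six-figure transfer
        points += 40
    if req.minutes_since_voice_call is not None and req.minutes_since_voice_call <= 30:
        points += 30                           # request follows closely after a call
    if req.new_device:
        points += 20
    if req.device_reputation < 0.5:
        points += 10
    if req.call_duration_minutes >= 20:        # assumption: long calls may indicate coaching
        points += 10
    if not req.payee_seen_before:
        points += 10
    return min(points, 100)


def needs_human_review(req: TransferRequest, threshold: int = 70) -> bool:
    """True when the blended score reaches the (assumed) review threshold."""
    return risk_points(req) >= threshold


suspicious = TransferRequest(150_000, 5, True, 0.3, 25, False)
routine = TransferRequest(200, None, False, 0.9, 0, True)
print(needs_human_review(suspicious), needs_human_review(routine))  # True False
```

A production system would more likely learn these weights from labeled fraud cases, but the shape is the same: no single signal, voice included, decides the outcome on its own.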

Device-side detection and edge AI

Because cloud analysis can be invasive, handset vendors are pushing detection to the edge. On-device models track acoustic artifacts like over-smoothing and latency jitter, acting as a local intrusion detection system (IDS) for conversations. Combine that with contextual signals (is the call happening at 3 a.m., is it the first time this contact has asked for payment credentials) and phones can surface a risk banner without sending audio to the cloud. Some banks are piloting in-app call-back buttons that shut down the line and re-establish contact through a verified channel, effectively treating the microphone as another input that needs liveness checks.
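
A minimal sketch of how such a banner decision could work on the handset, using only metadata and a score from a hypothetical on-device acoustic model. The `artifact_score` field, the 0.8 cutoff, and the midnight-to-5 a.m. window are assumptions for illustration; no audio leaves the function.

```python
from dataclasses import dataclass
from datetime import time
from typing import List, Optional


@dataclass
class CallContext:
    local_time: time
    caller_requests_credentials: bool
    contact_requested_credentials_before: bool
    artifact_score: float  # 0.0 to 1.0 from a hypothetical on-device acoustic model


def risk_reasons(ctx: CallContext) -> List[str]:
    """Collect human-readable reasons this call looks unusual."""
    reasons = []
    if time(0, 0) <= ctx.local_time < time(5, 0):
        reasons.append("call at an unusual hour")
    if ctx.caller_requests_credentials and not ctx.contact_requested_credentials_before:
        reasons.append("first request for payment credentials from this contact")
    if ctx.artifact_score >= 0.8:
        reasons.append("audio shows signs of synthesis")
    return reasons


def risk_banner(ctx: CallContext) -> Optional[str]:
    """Return banner text when at least one reason fires, else None."""
    reasons = risk_reasons(ctx)
    if not reasons:
        return None
    return "Caution: " + "; ".join(reasons)


late_call = CallContext(time(3, 0), True, False, 0.2)
print(risk_banner(late_call))
# Caution: call at an unusual hour; first request for payment credentials from this contact
```

Surfacing the reasons, rather than a bare warning, gives the person on the call something specific to act on, such as hanging up and using a call-back button.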

Regulators race to redefine consent

Legal liability shifts to platforms

Regulators are starting to treat AI-assisted calls as a distinct category. The recent clampdown on synthetic political robocalls showed that consent must cover not just the number dialed but the use of synthesized voices. Liability is drifting toward platforms that host the models and the VoIP providers that carry the traffic. That creates a compliance stack where model providers must log training data provenance, call centers must document agent prompts, and carriers must flag abnormal call origination patterns. Expect attestation of AI usage to become as common as cookie notices.

Global patchwork creates exploitable gaps

Enforcement is wildly uneven. One jurisdiction treats AI voice cloning as identity theft, another sees it as a gray zone of creative expression. Attackers exploit that by hosting models in lenient regions and targeting victims across borders. Financial institutions operating globally need a policy engine that maps local rules to operational controls: where must calls be recorded, when are customers allowed to opt out, which prompts require legal review. Without that translation layer, compliance becomes a whack-a-mole exercise while criminals enjoy frictionless arbitrage.
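
One way to picture that translation layer is a small lookup that maps a region to operational controls and falls back to the strictest settings when a region is unknown. The region names below are placeholders, not real jurisdictions, and the "stricter of the two sides" rule for cross-border calls is an assumption, not legal guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallPolicy:
    record_calls: bool               # must calls be recorded?
    opt_out_allowed: bool            # must customers be offered an opt-out?
    prompts_need_legal_review: bool  # must agent prompts pass legal review?


# Fallback when a region has not been mapped yet: assume every control applies.
STRICTEST = CallPolicy(True, True, True)

# Placeholder regions with made-up rules, purely for illustration.
POLICIES = {
    "REGION_A": CallPolicy(record_calls=True, opt_out_allowed=True, prompts_need_legal_review=True),
    "REGION_B": CallPolicy(record_calls=False, opt_out_allowed=True, prompts_need_legal_review=False),
}


def policy_for(region: str) -> CallPolicy:
    """Look up a region, defaulting to the strictest controls."""
    return POLICIES.get(region, STRICTEST)


def controls_for_call(caller_region: str, callee_region: str) -> CallPolicy:
    """For cross-border calls, apply a control if either side requires it (assumed rule)."""
    a, b = policy_for(caller_region), policy_for(callee_region)
    return CallPolicy(
        record_calls=a.record_calls or b.record_calls,
        opt_out_allowed=a.opt_out_allowed or b.opt_out_allowed,
        prompts_need_legal_review=a.prompts_need_legal_review or b.prompts_need_legal_review,
    )


print(controls_for_call("REGION_A", "REGION_B"))
```

Keeping the rules as data means legal teams can update a table while the call flow code stays unchanged.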

Building resilience for brands and people

Operational controls for enterprises

Enterprises cannot wait for perfect regulation. They need runbooks that assume voice compromise by default. Train call center agents to escalate any customer claim that references an earlier call, track internal requests through ticket IDs instead of voices, and verify high-value actions with multi-modal checks like device push plus liveness. Cyber teams should monitor call recordings the way they monitor logs: sampling, anomaly detection, and red teaming with synthetic calls to stress-test controls.

- Adopt a call-back rule: outbound calls that request payments must be verifiable through an inbound number listed on the official site.

- Tag voice interactions with transaction codes that must match across channels before funds move.

- Rotate security phrases for high-risk customers, stored in a hardware-backed password manager rather than shared scripts.

- Run quarterly tabletop exercises that include AI voice intrusion scenarios and measure time to containment.
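
The transaction-code control in the list above can be sketched with a keyed hash: both the call center system and the banking app derive a short code from the same transaction details, and funds move only if the two codes agree. The secret, the six-digit length, and the function names are assumptions for illustration; key distribution and storage are out of scope here.

```python
import hashlib
import hmac


def transaction_code(secret: bytes, transaction_id: str, amount_cents: int, digits: int = 6) -> str:
    """Derive a short numeric code bound to one transaction and amount."""
    message = f"{transaction_id}:{amount_cents}".encode()
    digest = hmac.new(secret, message, hashlib.sha256).digest()
    number = int.from_bytes(digest[:4], "big") % (10 ** digits)
    return str(number).zfill(digits)


def codes_match(code_from_call: str, code_from_app: str) -> bool:
    """Compare the two channels' codes in constant time."""
    return hmac.compare_digest(code_from_call.encode(), code_from_app.encode())


# Hypothetical shared secret and transaction; real keys belong in a secure store.
secret = b"demo-secret-not-for-production"
code_read_by_agent = transaction_code(secret, "TX-1001", 250_000)
code_shown_in_app = transaction_code(secret, "TX-1001", 250_000)
print(codes_match(code_read_by_agent, code_shown_in_app))  # True
```

Because the code is bound to the transaction ID and amount, a caller who changes the amount mid-conversation cannot reuse a code the customer saw for a different transfer, barring a rare collision in the short numeric code.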

Consumer-level habits that actually work

Consumers can borrow enterprise discipline. Set a family rule: no money moves over voice without a pre-agreed code word sent via text. If a caller claims urgency, hang up and dial back using the number on your card or the official app. Disable automatic call forwarding and audit voicemail access because attackers use those to hijack recovery flows. Keep software updated so on-device detectors improve, and treat every unexpected call as a phishing email with audio. These habits are boring, which is why they work: they replace gut checks with repeatable procedures.

What comes next for voice trust

Rise of authenticated voice

We are likely to see authenticated caller sessions where both sides exchange tokens before audio even begins. Think of it as mTLS for voice: the caller presents a signed credential, the recipient verifies it through the bank app, and only then does the conversation start. Brands that embed this into their apps will turn voice from a liability back into a service channel. Expect operating systems to expose call intent APIs so apps can declare why they are calling and provide cryptographic proof.
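
Here is a minimal sketch of that handshake, under heavy assumptions: the recipient's app issues a one-time nonce, the caller returns a signed statement of who is calling and why, and the app checks signature, nonce, and freshness before the call is presented as verified. An HMAC with a shared key stands in for the signature to keep the example inside the standard library; a real design in the spirit of mTLS would more plausibly use certificates and asymmetric signatures. All names and the 60-second window are hypothetical.

```python
import hashlib
import hmac
import json


def sign_call_intent(key: bytes, caller: str, intent: str, nonce: str, issued_at: int) -> dict:
    """Caller side: bind identity, stated intent, and the recipient's nonce together."""
    claims = {"caller": caller, "intent": intent, "nonce": nonce, "iat": issued_at}
    body = json.dumps(claims, sort_keys=True).encode()
    tag = hmac.new(key, body, hashlib.sha256).hexdigest()
    return {"claims": claims, "tag": tag}


def verify_call_intent(key: bytes, token: dict, expected_nonce: str, now: int,
                       max_age_seconds: int = 60) -> bool:
    """Recipient side: check integrity, then the nonce, then freshness."""
    body = json.dumps(token["claims"], sort_keys=True).encode()
    expected_tag = hmac.new(key, body, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected_tag.encode(), str(token["tag"]).encode()):
        return False
    claims = token["claims"]
    if claims["nonce"] != expected_nonce:
        return False
    return 0 <= now - claims["iat"] <= max_age_seconds


# Hypothetical values for illustration only.
key = b"demo-key-not-for-production"
token = sign_call_intent(key, "Example Bank", "confirm card replacement", "nonce-123", 1_700_000_000)
print(verify_call_intent(key, token, "nonce-123", now=1_700_000_030))  # True
```

The nonce stops an attacker from replaying an old token, and the expiry keeps a captured token from being useful for long.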

The economic arms race

Attackers will not stand still. As detection models flag common artifacts, generative tools will add noise and micro-pauses to mimic human imperfections. Costs will still trend downward, meaning small crews can run campaigns that used to require state-level budgets. The winning defenders will treat AI phone scams as an economic problem: raise attacker costs with friction like verified call flows, shrink blast radius with transaction limits, and speed detection with shared threat intelligence. The future of voice is not doom; it is a redesign that treats conversation as data requiring integrity, authenticity, and audit trails.
