AI Safety Governance Turns Into Shipping Rules

AI safety governance is moving from summit pledges to shipping deadlines. Regulators are drafting rules that treat frontier AI less like research and more like critical infrastructure. For model labs, cloud providers, and startups, the pain point is simple: compliance obligations are arriving faster than product teams can retool data pipelines, eval loops, and incident response. Investors see the same trend as a new moat, not a nuisance. Ignore the shift and you risk losing market access; embrace it and you can design defensible products.

Officials are signalling that voluntary guardrails will be replaced by mandatory reporting, safety tests, and export controls. That signal lands while enterprises are scaling generative workloads, so the governance debate is unfolding alongside live deployments rather than ahead of them. The stakes are as high as the compute bills: shipping without a safety story invites fines, bans, or public backlash, especially when AI-driven failures can cascade through supply chains.

- Mandatory disclosures for high-capability models are becoming table stakes rather than optional PDFs.

- Teams that operationalise evaluations now will outrun slower rivals once audits become compulsory.

- Supply chain transparency is shifting from nice-to-have to a procurement prerequisite.

- Cross-border divergence will reward architectures that can be configured for multiple regimes.

AI Safety Governance Starts With Infrastructure

National strategies increasingly anchor on compute thresholds: if a training run exceeds a set amount of compute, typically measured in total floating-point operations, expect obligations to register, log, and report. That pushes AI safety governance down into the infrastructure layer. Operators are being asked to document how GPU clusters are allocated, how API access is throttled, and how they detect anomalous workloads that might indicate misuse or model exfiltration. The direction of travel is clear: safety reviews will ride alongside performance benchmarks every time a new model is spun up.
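A quick way to see whether a planned run lands near a compute threshold is to estimate its training compute before it starts. The sketch below uses the widely cited rule of thumb of roughly 6 floating-point operations per parameter per training token for dense transformers. That approximation, the helper names, and the default threshold of 1e25 FLOPs are illustrative assumptions, not the text of any specific regulation.

```python
# Sketch: screen a planned training run against a compute threshold.
# Assumption: the "6 * params * tokens" rule of thumb for dense transformers.

def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate total training compute as 6 FLOPs per parameter per token."""
    return 6.0 * n_params * n_tokens


def crosses_reporting_threshold(n_params: float, n_tokens: float,
                                threshold_flops: float = 1e25) -> bool:
    """True if the estimated run meets or exceeds the threshold.

    The 1e25 default is an illustrative assumption; use the figure and
    counting rules of whichever regime actually applies.
    """
    return estimate_training_flops(n_params, n_tokens) >= threshold_flops


if __name__ == "__main__":
    # Hypothetical planned runs: (parameters, training tokens).
    planned_runs = {
        "mid-size": (70e9, 15e12),
        "frontier": (400e9, 15e12),
    }
    for name, (params, tokens) in planned_runs.items():
        flops = estimate_training_flops(params, tokens)
        needs_report = crosses_reporting_threshold(params, tokens)
        print(f"{name}: ~{flops:.2e} FLOPs, report={needs_report}")
```

With these hypothetical numbers, the 400B-parameter run is flagged (about 3.6e25 FLOPs) and the 70B run is not (about 6.3e24). Treat the estimate as a screening signal; the regime you report under defines how compute must actually be counted.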

Data pipelines under the microscope

Governance is forcing teams to treat data curation as a regulated workflow. Expect requirements to document provenance, remove prohibited content, and prove that red-teaming datasets are refreshed. Tools like data lineage graphs and content filters that once lived in compliance slide decks now need to be wired directly into ETL jobs. Pro tip: instrument observability hooks that log sampling, deduping, and policy enforcement in real time, because regulators will not accept screenshots when something goes wrong.
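Here is a minimal sketch of what observability hooks in an ETL job might look like, assuming a Python pipeline. Each stage (seeded sampling, exact-match deduplication, and a keyword-based content policy) emits one structured JSON event with input and output counts, and the policy stage records which sources lost records. The `Record` type, the `BLOCKED_TERMS` list, and the stage functions are hypothetical; a production filter would use a real classifier rather than substring matching.

```python
import hashlib
import json
import logging
import random
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("etl.governance")

# Hypothetical content policy: substrings that mark a record as prohibited.
BLOCKED_TERMS = ("ssn:", "password=")


@dataclass(frozen=True)
class Record:
    source: str  # provenance: which crawl, vendor, or upload produced it
    text: str


def emit(stage: str, **fields) -> None:
    """Log one structured, machine-readable event per pipeline stage."""
    log.info(json.dumps({"stage": stage, **fields}, sort_keys=True))


def sample(records, rate: float, seed: int = 0):
    """Keep each record with probability `rate`; the seed makes runs reproducible."""
    rng = random.Random(seed)
    kept = [r for r in records if rng.random() < rate]
    emit("sample", rate=rate, seed=seed, n_in=len(records), n_out=len(kept))
    return kept


def dedupe(records):
    """Drop exact duplicates by SHA-256 of the text, keeping the first occurrence."""
    seen, kept = set(), []
    for r in records:
        digest = hashlib.sha256(r.text.encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            kept.append(r)
    emit("dedupe", n_in=len(records), n_out=len(kept))
    return kept


def enforce_policy(records):
    """Remove records that match a blocked term and log which sources were hit."""
    kept, hit_sources = [], set()
    for r in records:
        if any(term in r.text.lower() for term in BLOCKED_TERMS):
            hit_sources.add(r.source)
        else:
            kept.append(r)
    emit("policy", n_in=len(records), n_out=len(kept),
         dropped_from=sorted(hit_sources))
    return kept


def run_pipeline(records, sample_rate: float = 1.0):
    return enforce_policy(dedupe(sample(records, sample_rate)))


if __name__ == "__main__":
    raw = [
        Record("crawl-a", "hello world"),
        Record("crawl-b", "hello world"),        # duplicate text
        Record("vendor-c", "password=hunter2"),  # violates the policy
    ]
    clean = run_pipeline(raw)
    print([r.source for r in clean])  # prints ['crawl-a']
```

Because every event is machine-readable, the same log lines can feed dashboards during normal operation and be replayed as evidence during an investigation.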

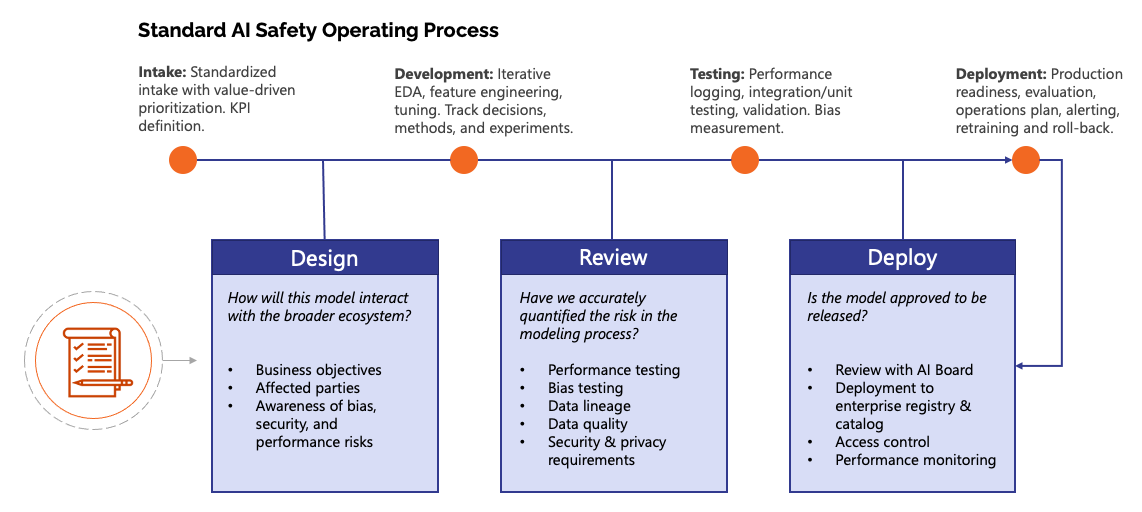

Model accountability becomes productized

Model cards and system cards are evolving from marketing collateral into living compliance artifacts. Every major release now needs traceable eval harness results, documented guardrails, and incident playbooks. The ship-first, retrofit-later strategy is dying. Instead, teams are embedding safety criteria in their CI/CD gates, blocking promotion to production unless red-team regression tests pass. That is how governance becomes culture: the pipeline refuses to ship unsafe artifacts by design.
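A promotion gate of this kind can be as simple as a script that CI runs after the eval harness finishes. The sketch below assumes the harness writes per-suite failure rates to JSON files for the candidate and for the last promoted model; the suite names, limits, and tolerance are hypothetical placeholders. A non-zero exit code is what CI systems treat as a failed step, which is what blocks promotion.

```python
import json
import sys

# Hypothetical limits: maximum tolerated failure rate for each red-team suite.
THRESHOLDS = {"jailbreak": 0.05, "prompt_injection": 0.10, "pii_leak": 0.01}
# Hypothetical slack versus the last promoted model before a rise counts as a regression.
REGRESSION_TOLERANCE = 0.01


def gate(current: dict, baseline: dict) -> list:
    """Return human-readable failures; an empty list means the candidate may be promoted."""
    failures = []
    for suite, limit in THRESHOLDS.items():
        if suite not in current:
            failures.append(f"{suite}: no result reported")
            continue
        rate = current[suite]
        if rate > limit:
            failures.append(f"{suite}: failure rate {rate:.3f} exceeds limit {limit:.3f}")
        previous = baseline.get(suite)
        if previous is not None and rate > previous + REGRESSION_TOLERANCE:
            failures.append(f"{suite}: regressed from {previous:.3f} to {rate:.3f}")
    return failures


def main(current_path: str, baseline_path: str) -> int:
    with open(current_path, encoding="utf-8") as f:
        current = json.load(f)
    with open(baseline_path, encoding="utf-8") as f:
        baseline = json.load(f)
    failures = gate(current, baseline)
    for failure in failures:
        print(f"BLOCKED {failure}", file=sys.stderr)
    # CI treats a non-zero exit code as a failed step, which stops promotion.
    return 1 if failures else 0


if __name__ == "__main__" and len(sys.argv) == 3:
    sys.exit(main(sys.argv[1], sys.argv[2]))
```

Saved under a hypothetical name such as `safety_gate.py`, it would run as `python safety_gate.py current.json baseline.json`. Checking both an absolute limit and the delta against the previous release catches models that are still "under the bar" but quietly getting worse.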

How AI Safety Governance Reshapes Product Teams

Governance is no longer an annual policy review; it is a daily design constraint. Product owners must translate abstract risk categories into clear user stories. Security leaders need to anticipate how a new multimodal feature changes the attack surface. Legal teams need visibility into telemetry so they can prove due diligence. The most effective organizations are assembling cross-functional tiger teams that pair ML engineers with policy specialists, because the questions are increasingly technical: What counts as sufficient RLHF coverage? How do you measure jailbreak resistance over time?
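One common way to track jailbreak resistance over time is the attack success rate (ASR): the share of adversarial prompts that elicit a disallowed output, compared release by release. The sketch assumes each release is tested against the same, versioned prompt set, since ASR numbers from different prompt sets are not comparable. The `RedTeamRun` type, release labels, and counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RedTeamRun:
    release: str
    attempts: int   # adversarial prompts sent, from a fixed, versioned prompt set
    successes: int  # prompts that elicited a disallowed output

    def __post_init__(self):
        if self.attempts <= 0 or not 0 <= self.successes <= self.attempts:
            raise ValueError(f"invalid counts for {self.release}")

    @property
    def attack_success_rate(self) -> float:
        return self.successes / self.attempts


def resistance_trend(runs):
    """Return (release, ASR, change vs. previous release) for each run, in order."""
    trend, previous = [], None
    for run in runs:
        asr = run.attack_success_rate
        delta = None if previous is None else asr - previous
        trend.append((run.release, asr, delta))
        previous = asr
    return trend


if __name__ == "__main__":
    # Hypothetical results for three releases tested against the same prompt set.
    history = [
        RedTeamRun("v1.0", attempts=500, successes=40),
        RedTeamRun("v1.1", attempts=500, successes=25),
        RedTeamRun("v1.2", attempts=500, successes=35),
    ]
    for release, asr, delta in resistance_trend(history):
        change = "n/a" if delta is None else f"{delta:+.1%}"
        print(f"{release}: ASR {asr:.1%} (change {change})")
```

A rising ASR between releases, like the move from v1.1 to v1.2 in this hypothetical history, is the signal to investigate before shipping.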

Security-first design beats patchwork

Traditional appsec checklists do not map cleanly onto generative systems. Threat models must include prompt injection, dataset poisoning, and model inversion. That is why forward-looking security leaders are shipping with built-in mitigations: content filtering at the API edge, rate limiting tuned for LLM abuse, and memory sandboxing for agents that operate over sensitive documents. The best teams treat red-teaming as a continuous service, not a quarterly stunt, and they publish postmortems internally so recurring patterns can be fixed at the library level.
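Rate limiting tuned for LLM abuse usually means metering tokens rather than requests, because one long prompt with a large output budget can cost far more than hundreds of short calls. Below is a minimal per-key token bucket under that assumption. It reserves the prompt plus the requested output budget up front; the class name and parameters are hypothetical, and a real gateway would likely refund unused output tokens and keep state in a shared store rather than in process memory.

```python
import time
from typing import Callable, Dict, Tuple


class TokenRateLimiter:
    """Per-API-key token bucket that charges by LLM tokens rather than by request."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity              # largest burst a key may spend at once
        self.refill_per_sec = refill_per_sec  # sustained token allowance per key
        self.clock = clock                    # injectable so tests can control time
        # api_key -> (tokens remaining, time of last update)
        self._state: Dict[str, Tuple[float, float]] = {}

    def allow(self, api_key: str, prompt_tokens: int, max_output_tokens: int) -> bool:
        now = self.clock()
        tokens, last = self._state.get(api_key, (self.capacity, now))
        # Refill for the time elapsed since this key was last seen, capped at capacity.
        tokens = min(self.capacity, tokens + (now - last) * self.refill_per_sec)
        # Reserve the worst case: the prompt plus the full requested output budget.
        cost = prompt_tokens + max_output_tokens
        allowed = cost <= tokens
        if allowed:
            tokens -= cost
        self._state[api_key] = (tokens, now)
        return allowed


if __name__ == "__main__":
    limiter = TokenRateLimiter(capacity=8_000, refill_per_sec=50)
    print(limiter.allow("key-123", prompt_tokens=1_500, max_output_tokens=2_000))
    print(limiter.allow("key-123", prompt_tokens=6_000, max_output_tokens=2_000))
```

Charging the requested output budget rather than the actual output makes the limiter conservative, which is usually the safer default for abuse prevention.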

Pricing, liability, and the new contract math

As governance hardens, procurement teams are adding clauses that shift liability back to vendors. Expect to see SLAs tied to safety metrics, not just latency. A provider might promise that its content moderation stack catches 99 percent of disallowed outputs and offer credits if it does not. That reshapes pricing models: risk-adjusted premiums will reward vendors who can prove strong controls. The upside for builders is differentiation; if you can show verifiable audit logs and certified SBOMs, you can command enterprise pricing while rivals haggle.
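The contract math behind a safety SLA is plain arithmetic once the metric is pinned down. This sketch assumes the catch rate is measured on an independently labeled audit sample of disallowed prompts and that credits follow a tiered schedule; the tiers, fee, and function names are invented for illustration, with only the 99 percent target taken from the example above.

```python
def catch_rate(caught: int, disallowed_total: int) -> float:
    """Share of known-disallowed audit prompts that the moderation stack blocked."""
    if disallowed_total <= 0 or not 0 <= caught <= disallowed_total:
        raise ValueError("caught must be between 0 and a positive disallowed_total")
    return caught / disallowed_total


# Hypothetical credit schedule, best tier first:
# (minimum catch rate, credit as a fraction of the monthly fee).
CREDIT_TIERS = [(0.99, 0.00), (0.97, 0.10), (0.95, 0.25), (0.00, 0.50)]


def sla_credit(caught: int, disallowed_total: int, monthly_fee: float):
    """Return (measured catch rate, credit owed to the customer)."""
    rate = catch_rate(caught, disallowed_total)
    for floor, credit_fraction in CREDIT_TIERS:
        if rate >= floor:
            return rate, monthly_fee * credit_fraction
    # Unreachable while the last tier's floor is 0.0; kept as a safe default.
    return rate, monthly_fee * CREDIT_TIERS[-1][1]


if __name__ == "__main__":
    rate, credit = sla_credit(caught=1_950, disallowed_total=2_000, monthly_fee=20_000)
    print(f"catch rate {rate:.1%}, credit ${credit:,.2f}")
```

In this sketch, catching 1,950 of 2,000 audited prompts (97.5 percent) misses the 99 percent tier and lands in the 10 percent credit band, so the vendor owes $2,000 on a $20,000 monthly fee. How the audit sample is drawn matters as much as the headline percentage.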

Geopolitics and Market Reality

AI safety governance is splitting into regional flavors. The United States is exploring executive tools tied to compute exports and critical infrastructure designations. The European Union is rolling out horizontal rules that classify AI systems by risk and enforce pre-market conformity checks. East Asian markets are layering content and security mandates onto domestic platforms. Multinationals cannot afford to pick one lane; they need architectures that can be configured per region without fragmenting codebases.

Regulatory divergence as design constraint

To cope, platform teams are building policy engines that load region-specific rules at runtime. One configuration might exclude training data that touches sensitive categories, while another enforces stricter logging for regulated industries. This modularity turns governance into a competitive advantage because it shortens time to compliance when laws shift. As one policy lead put it in a private briefing:

Compliance used to be paperwork. Now it is a feature flag backed by telemetry.
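A runtime policy engine of the kind described above can start as layered configuration. In this sketch, a default profile is merged with any region or industry profiles that apply, using a strictest-wins rule: excluded data categories accumulate, retention takes the longest value, and prompt logging turns on if any layer requires it. The profile names, categories, and numbers are hypothetical and do not reflect any actual regulation.

```python
import json
from dataclasses import dataclass

# Hypothetical profiles; in practice this JSON would come from a versioned
# config store so rules can change without redeploying code.
POLICY_JSON = """
{
  "default": {"excluded_data_categories": [], "log_retention_days": 30, "log_prompts": false},
  "eu":      {"excluded_data_categories": ["biometric", "health"], "log_retention_days": 180},
  "finance": {"log_retention_days": 365, "log_prompts": true}
}
"""


@dataclass(frozen=True)
class Policy:
    excluded_data_categories: frozenset
    log_retention_days: int
    log_prompts: bool


def resolve_policy(profiles: dict, *active: str) -> Policy:
    """Merge the default profile with each active profile, strictest rule winning."""
    excluded, retention, log_prompts = set(), 0, False
    for layer in [profiles["default"], *(profiles[name] for name in active)]:
        excluded |= set(layer.get("excluded_data_categories", []))     # exclusions accumulate
        retention = max(retention, layer.get("log_retention_days", 0))  # longest retention wins
        log_prompts = log_prompts or layer.get("log_prompts", False)    # any layer can require logging
    return Policy(frozenset(excluded), retention, log_prompts)


def usable_for_training(record_categories, policy: Policy) -> bool:
    """A record is usable only if none of its categories is excluded."""
    return not set(record_categories) & policy.excluded_data_categories


if __name__ == "__main__":
    profiles = json.loads(POLICY_JSON)
    eu_bank = resolve_policy(profiles, "eu", "finance")
    print(eu_bank)
    print(usable_for_training({"news"}, eu_bank), usable_for_training({"health"}, eu_bank))
```

The strictest-wins merge means adding a profile can only tighten behavior, which keeps a misordered configuration from silently relaxing a regional rule.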

Chip supply chains become policy levers

Export controls on advanced accelerators are now core to governance. If compute is capped, model design must adapt: more efficient architectures, aggressive parameter sharing, and smarter distillation. Cloud providers are also being pushed to attest to who uses their clusters and for what. Expect to see identity proofing at the API layer and tamper-evident logs that map workload IDs to customers. The cloud wars are quietly becoming compliance wars.
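Tamper-evident logs are often built as hash chains: each record stores the hash of the previous one, so editing, reordering, or removing an earlier entry breaks every link after it. The sketch below maps hypothetical workload IDs to customers this way. One caveat: anyone with write access can recompute the whole chain or truncate its tail, so in practice the latest hash would need to be anchored somewhere the operator cannot rewrite, such as with an auditor or a transparency log.

```python
import hashlib
import json
import time
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _link_hash(prev_hash: str, entry: dict) -> str:
    """Hash the previous link together with a canonical JSON encoding of the entry."""
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class WorkloadLog:
    """Append-only log in which each record commits to the hash of the one before it."""

    def __init__(self):
        self.records = []

    def append(self, workload_id: str, customer_id: str, purpose: str,
               ts: Optional[float] = None) -> str:
        entry = {
            "workload_id": workload_id,
            "customer_id": customer_id,
            "purpose": purpose,
            "ts": time.time() if ts is None else ts,
        }
        prev = self.records[-1]["hash"] if self.records else GENESIS
        link = _link_hash(prev, entry)
        self.records.append({"entry": entry, "prev": prev, "hash": link})
        return link  # the head hash is what you would anchor externally

    def verify(self) -> bool:
        """Recompute every link; editing, reordering, or removing an earlier record fails."""
        prev = GENESIS
        for record in self.records:
            if record["prev"] != prev or record["hash"] != _link_hash(prev, record["entry"]):
                return False
            prev = record["hash"]
        return True


if __name__ == "__main__":
    log = WorkloadLog()
    log.append("wl-001", "cust-a", "fine-tuning")
    log.append("wl-002", "cust-b", "batch inference")
    print(log.verify())
    log.records[0]["entry"]["customer_id"] = "cust-x"  # simulate tampering
    print(log.verify())
```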

Builder Playbook for AI Safety Governance

Immediate steps

- Inventory your models and map them to risk classes; publish model cards and refresh them with every sprint.

- Wire automated evaluations into CI/CD; fail builds if critical safety tests regress.

- Generate an SBOM for your AI stack so you can answer supply chain questions on demand.

- Stand up incident playbooks that define escalation for prompt attacks, data leaks, and output hallucinations.
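For the SBOM step, a minimal starting point is to enumerate installed Python packages and add entries for the models and datasets you ship. The output below loosely follows CycloneDX field names (`bomFormat`, `specVersion`, `components`); treat it as a sketch and validate it against the official CycloneDX schema, or use a dedicated SBOM generator, before handing it to a customer. The model and dataset names in the usage example are hypothetical.

```python
import json
from importlib import metadata


def python_components():
    """List installed Python distributions as SBOM library components."""
    components = []
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip broken installs that lack a name
            components.append({"type": "library", "name": name, "version": dist.version})
    return sorted(components, key=lambda c: c["name"].lower())


def build_ai_sbom(models, datasets):
    """Assemble a minimal CycloneDX-style BOM covering code, models, and datasets."""
    components = python_components()
    components += [{"type": "machine-learning-model", "name": m["name"], "version": m["version"]}
                   for m in models]
    components += [{"type": "data", "name": d["name"], "version": d["version"]}
                   for d in datasets]
    return {"bomFormat": "CycloneDX", "specVersion": "1.5", "version": 1,
            "components": components}


if __name__ == "__main__":
    sbom = build_ai_sbom(
        models=[{"name": "support-assistant", "version": "2.3.0"}],   # hypothetical model
        datasets=[{"name": "support-tickets", "version": "2024-q2"}],  # hypothetical dataset
    )
    print(json.dumps(sbom, indent=2))
```

Listing models and datasets next to code dependencies is the point: supply chain questions about AI systems usually concern the weights and training data, not just the libraries.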

Pro tips that reduce drag

Use privacy-preserving data pipelines by default so you are prepared for stricter data minimization rules. Run continuous red-team drills with third parties to avoid blind spots. Label training data with region flags so you can exclude segments when exporting models. Document human oversight in deployment workflows; regulators want to see who can override a system and how often they do. Finally, simulate audits: rehearse producing audit logs, eval reports, and change histories within tight deadlines. That muscle memory shortens real investigations.
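Labeling training data with region flags makes export-time exclusion a filter rather than a forensic exercise. This sketch assumes every record carries the set of regions it has been cleared for, with `"*"` meaning cleared everywhere; the `Example` type and region codes are hypothetical. Returning the excluded IDs alongside the kept records gives you an audit trail for each export.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    example_id: str
    text: str
    cleared_regions: frozenset  # regions this record may ship to; "*" means everywhere


def split_for_export(examples, target_region: str):
    """Return (records cleared for target_region, IDs of records excluded)."""
    kept, excluded_ids = [], []
    for ex in examples:
        if "*" in ex.cleared_regions or target_region in ex.cleared_regions:
            kept.append(ex)
        else:
            excluded_ids.append(ex.example_id)
    return kept, excluded_ids


if __name__ == "__main__":
    corpus = [
        Example("ex-1", "public docs", frozenset({"*"})),
        Example("ex-2", "licensed EU-only data", frozenset({"eu"})),
        Example("ex-3", "US customer logs", frozenset({"us"})),
    ]
    kept, excluded = split_for_export(corpus, "eu")
    print([e.example_id for e in kept], excluded)  # prints ['ex-1', 'ex-2'] ['ex-3']
```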

Future Outlook

Governance momentum will not slow. Next on the docket: real-time monitoring mandates for frontier systems, licensing for high-risk deployments, and penalties tied to the severity of incidents rather than headline fines. Startups that bake compliance into their technical stack will find that safety can be a feature: enterprise buyers increasingly ask for proof of resilience alongside benchmarks. For incumbents, the challenge is cultural. They must reward teams for shipping safer, not just faster. The winning pattern is clear: treat AI safety governance as a design material, integrate it into code and contracts, and turn regulation into a moat rather than a tax.

The information provided in this article is for general informational purposes only. While we strive for accuracy, we make no guarantees about the completeness or reliability of the content. Always verify important information through official or multiple sources before making decisions.