Colorado Lawmakers Push AI Rules and Tax Credits

State legislatures are no longer tinkering around the edges of tech policy. They are becoming the front line. In Colorado, that shift is happening in plain view: lawmakers are trying to regulate artificial intelligence, reshape economic incentives through tax credits, and keep high-voltage social policy fights alive at the same time. That combination matters because it shows what modern governing looks like when software, healthcare, business incentives, and ideology all collide in one session. For companies, workers, and voters, the message is blunt: the rules that shape how AI is built, sold, and used may increasingly come from state capitols before Washington can move. Colorado is turning into a case study in how quickly local politics can set the tone for national debates.

- Colorado lawmakers are treating AI regulation as an immediate policy issue, not a future one.

- The same legislative session is also tackling tax credits and abortion pill policy, showing how crowded and consequential state agendas have become.

- Businesses should read this as a compliance warning: state-by-state rules are becoming the new normal.

- Colorado’s moves could influence how other states write AI laws and economic policy.

Why Colorado AI rules matter beyond Colorado

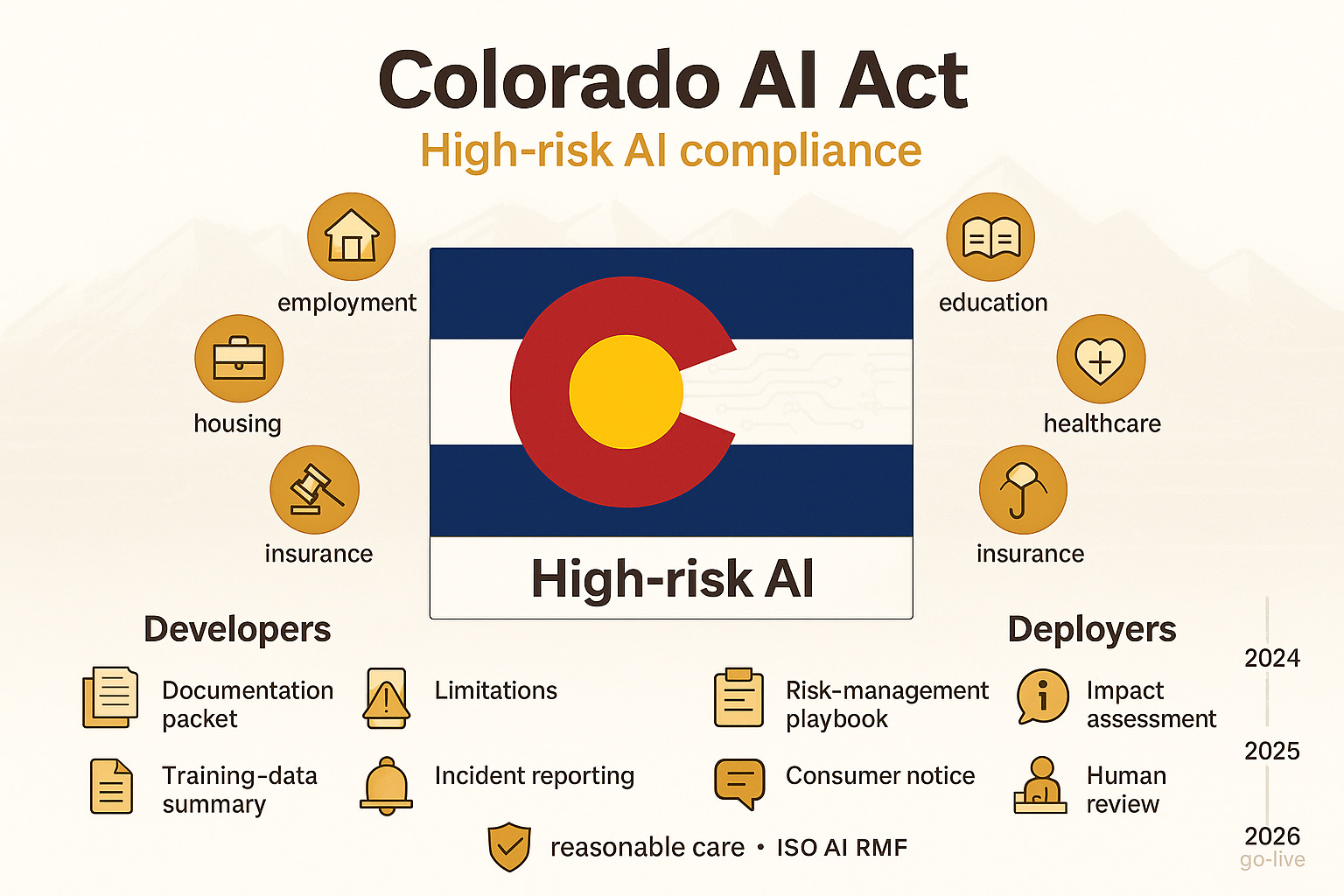

The headline issue here is simple: Colorado AI rules are part of a much bigger race to define accountability for automated systems. That sounds abstract until you look at what lawmakers are really worried about: biased decision-making, opaque algorithms, consumer harm, and the growing use of machine-generated outputs in sensitive areas like hiring, lending, education, insurance, and public services.

States have noticed that waiting for a comprehensive federal framework may take years. Meanwhile, companies are already shipping products powered by machine learning, large language models, and automated decision systems. So Colorado, like a handful of other states, appears to be moving with urgency.

That urgency is not just about catching up with technology. It is also about power. If states write the first durable rules, they can shape the compliance templates companies use nationwide. Businesses rarely build fifty different systems for fifty different states if they can avoid it, so a strong state law can become a de facto national standard.

Key insight: The real story is not just whether Colorado passes an AI measure. It is whether state-level AI governance becomes the operating baseline for the rest of the country.

The political stack is getting crowded fast

One reason this legislative moment stands out is that AI regulation is not arriving in isolation. It is sharing the agenda with tax credits and abortion pill policy – two issues that pull in very different coalitions and expose the breadth of what state lawmakers now manage.

That matters for two reasons. First, it raises the stakes for negotiation. Bills do not move in a vacuum. They compete for time, attention, and political capital. Second, it reveals how policy bundling works in practice. A legislature can be debating tech accountability in one committee room and reproductive healthcare access or fiscal incentives in another, all under the same compressed calendar.

The result is messy, but revealing. State government is becoming the place where digital policy and everyday life intersect most directly.

AI policy is no longer niche

Five years ago, AI oversight might have sounded like a specialty topic for academics, civil liberties groups, or a few tech lawyers. Not anymore. Once tools powered by generative AI and automated scoring systems became visible to the public, the politics changed. Lawmakers now understand that software can affect whether someone gets a job interview, qualifies for a financial product, or receives reliable information.

That is why these debates are expanding beyond privacy and into governance: who is responsible when an AI system causes harm, what disclosures should be required, and how companies prove they tested for risk.

Tax credits still shape the economic backdrop

Tax credits may sound less dramatic than AI or abortion policy, but they are central to how states compete. Credits can steer investment, support targeted industries, subsidize families, or soften the blow of economic disruption. In a session that also includes AI policy, they take on an added layer of meaning.

If states want innovation, they often reach for incentives. But if they fear harm, they reach for guardrails. Colorado appears to be trying to do both at once: encourage growth while establishing rules. That balancing act is increasingly the default approach in tech policy.

Abortion pill debates keep healthcare policy in the frame

The abortion pill issue underscores a broader point: modern state sessions are not compartmentalized. Tech does not sit in one tidy lane, business in another, and healthcare in a third. Public trust, access, safety, and ideology cross all of them.

For readers in the tech sector, this is a useful reminder that regulation rarely arrives as a clean, standalone package. It emerges from larger political ecosystems where lawmakers are managing moral conflict, budget pressure, and constituent demands all at once.

What businesses should watch in Colorado AI rules

If your company develops, deploys, sells, or relies on automated systems, Colorado AI rules should be read as an early operational signal. Even without the final statutory language in front of every executive, the likely pressure points are familiar.

1. Risk assessments become table stakes

Expect more pressure to document how systems are trained, evaluated, and monitored. That means internal reviews of data sources, testing methods, known limitations, and downstream impacts.

Pro tip: If your compliance process still treats AI like ordinary software, it is already outdated. Teams should distinguish between conventional software features and systems that make or influence consequential decisions.

2. Disclosure requirements could widen

One recurring theme in AI legislation is transparency. Users, consumers, or regulated entities may need to know when they are interacting with AI or when AI materially shapes an outcome. This does not solve every problem, but it creates a paper trail and a public expectation.

For companies, the challenge is practical: where do you place that disclosure, how specific does it need to be, and what evidence backs it up?

3. Bias and discrimination testing may move from optional to expected

Much of the anxiety around AI regulation centers on discriminatory outcomes. Lawmakers are especially focused on contexts where historical bias can be reproduced at scale. Hiring tools, eligibility systems, and predictive scoring products are obvious flashpoints.

If Colorado advances a stricter standard, organizations may need stronger auditing workflows and clearer accountability between product, legal, and policy teams.
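To make "bias testing" concrete, here is a minimal sketch of one common screening check: comparing selection rates across groups and flagging any group whose rate falls below 80 percent of the highest group's rate (the "four-fifths" rule of thumb from U.S. employment-selection guidance). The group names, counts, and the 0.8 threshold are assumptions for illustration only. Nothing in this article indicates that Colorado would adopt this particular test, and a real audit would involve far more than one ratio.

```python
def selection_rates(outcomes):
    """Map each group to its selection rate, given {group: (selected, total)}."""
    return {g: sel / total for g, (sel, total) in outcomes.items()}


def adverse_impact_ratios(outcomes):
    """Divide each group's selection rate by the highest group's rate.

    Assumes every total is positive and at least one group has a nonzero rate.
    """
    rates = selection_rates(outcomes)
    top = max(rates.values())
    return {g: rate / top for g, rate in rates.items()}


# Hypothetical counts: (candidates advanced, candidates screened)
outcomes = {"group_a": (50, 100), "group_b": (30, 100)}

ratios = adverse_impact_ratios(outcomes)

# Illustrative threshold: flag groups below four-fifths of the top rate.
flagged = [g for g, ratio in ratios.items() if ratio < 0.8]
print(flagged)
```

A flagged group is a prompt for closer review, not proof of discrimination. The point is that a check like this is cheap enough to run routinely and to log alongside other audit evidence.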

4. Vendors will not be able to hide behind black boxes

A common enterprise problem is that buyers rely on third-party AI products they do not fully understand. That model is getting harder to defend. If states impose duties on deployers as well as developers, companies may need contractual guarantees, audit rights, or more detailed technical documentation from vendors.

In plain English: buying AI from someone else will not automatically shift the risk away from you.

The strategic tension at the center of this session

Colorado’s legislative agenda reflects a deeper policy tension. Lawmakers want innovation, competitiveness, and economic dynamism. They also want safety, fairness, and public accountability. Those goals can coexist, but not without friction.

That friction shows up in almost every modern policy debate:

- How much regulation is enough to prevent harm without chilling investment?

- How fast can lawmakers act before the technology changes again?

- Who bears the compliance cost: startups, large incumbents, or consumers?

- Can a state law be tough enough to matter but flexible enough to survive legal and practical challenges?

These are not abstract questions. They determine whether a state becomes known as a regulatory leader, a business-friendly haven, or both.

The uncomfortable truth: Tech companies often ask for regulatory clarity, but when states start delivering it, clarity can feel a lot like constraint.

Why this matters for the national map

Colorado is not operating in a vacuum. State lawmakers across the country are watching one another closely. When one state tests a serious framework, others borrow language, adapt definitions, and compare political outcomes. That is how policy spreads.

For national companies, this creates a familiar but difficult pattern: the compliance map fragments before it consolidates. Teams that operate across multiple states may soon face a patchwork of rules around AI governance, documentation, consumer notice, and sector-specific restrictions.

There are two likely outcomes from here. The first is convergence, where a few leading states define the market standard and most companies align upward. The second is conflict, where different states take incompatible approaches and businesses are forced into expensive jurisdiction-by-jurisdiction adjustments.

Neither scenario is especially comfortable for industry, but both are plausible.

What smart organizations should do next

Even if Colorado’s final policy details evolve, the broader direction is clear. Companies should not wait for a surprise enforcement moment to get serious.

Build a basic AI governance layer now

Every organization using meaningful automated systems should know:

- Where AI is being used

- Which systems affect high-stakes decisions

- Who owns oversight internally

- What documentation exists for testing and monitoring

- Which vendors present the biggest transparency risk

At minimum, teams should maintain an internal inventory. Something as simple as a structured registry can help:

system_name | vendor | use_case | decision_impact | training_data_notes | risk_owner | review_date

This is not glamorous work, but it is the foundation of defensible compliance.
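As a rough sketch of what that registry could look like in practice, the Python below models one row per system using the same column names as above and adds a small helper that lists high-impact systems whose last review is more than a year old. The example records, the "low"/"high" impact labels, the reading of review_date as the date of the last completed review, and the 365-day window are all assumptions for illustration, not requirements drawn from any Colorado bill.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AISystemRecord:
    # Field names mirror the registry columns in the text.
    system_name: str
    vendor: str
    use_case: str
    decision_impact: str  # assumed labels: "low", "medium", "high"
    training_data_notes: str
    risk_owner: str
    review_date: date  # assumed to mean the last completed review


def needs_attention(records, today, max_age_days=365):
    """Return names of high-impact systems reviewed more than max_age_days ago."""
    return [
        r.system_name
        for r in records
        if r.decision_impact == "high"
        and (today - r.review_date).days > max_age_days
    ]


# Hypothetical entries for illustration only.
registry = [
    AISystemRecord("resume_screener", "ExampleVendor", "hiring triage", "high",
                   "vendor-supplied; provenance undocumented",
                   "HR compliance lead", date(2023, 1, 15)),
    AISystemRecord("support_chatbot", "in-house", "customer FAQ answers", "low",
                   "public help-center articles",
                   "support ops manager", date(2024, 3, 1)),
]

overdue = needs_attention(registry, today=date(2024, 6, 1))
print(overdue)
```

A spreadsheet would serve the same purpose. The value of a typed structure is that gaps, such as a missing risk owner or a stale review date, become easy to detect automatically.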

Stress-test vendor relationships

If a third party powers critical workflows, ask harder questions. Can the vendor explain model behavior at a useful level? Do they provide evidence of bias testing? Can they support audit obligations if a state regulator asks?

Too many procurement teams still treat AI products like ordinary SaaS. That is becoming a liability.

Track state policy like product risk

Legislative monitoring should not live only with government affairs. Product teams, legal counsel, trust and safety leads, and executive leadership all need visibility. State law is increasingly a product constraint, not just a lobbying topic.

The bigger editorial takeaway

What is happening in Colorado should end the lazy assumption that meaningful tech regulation only happens in Washington or Brussels. State capitols are moving faster, often with more direct consequences for ordinary users and local businesses.

That does not mean every bill will be elegant. Some proposals will be overbroad. Some will be underpowered. Some will create real compliance drag. But dismissing them would be a mistake. The legislative center of gravity is shifting, and Colorado is showing what that looks like in real time.

The mix of artificial intelligence rules, tax credits, and abortion pill policy may feel like an odd bundle. It is actually a preview. The next era of governance will be less neatly segmented, more operationally demanding, and far more local than the tech industry once expected.

Bottom line: Colorado is not just debating policy. It is testing how a state can govern a future where algorithms, incentives, and social conflict all compete for legal definition at once. That should get the attention of every executive, founder, policy analyst, and voter watching where power is moving next.
