Microsoft Rethinks Recall After Privacy Outcry

Microsoft thought Recall would be the hero feature that made Copilot+ laptops irresistible. Instead, the company is weathering a privacy storm that has security researchers, regulators, and even loyal Windows fans demanding a timeout. The promise of instant, AI-powered memory across every app is intoxicating, but the idea of a local database quietly logging screenshots of your life triggered a visceral “nope” from anyone who has ever typed a password, opened a medical portal, or drafted a confidential brief. This is where AI ambition collides with the limits of user trust, and it is unfolding in real time.

- Recall's rollout is paused while Microsoft rewrites its security story and makes the feature opt-in.

- Security pros warn a local screenshot index is a jackpot for malware without airtight controls.

- Copilot+ momentum stalls while regulators sharpen their gaze on AI telemetry and consent.

- Enterprise buyers want clear admin levers before deploying AI memory at scale.

How the Microsoft Recall Privacy Backlash Ignited

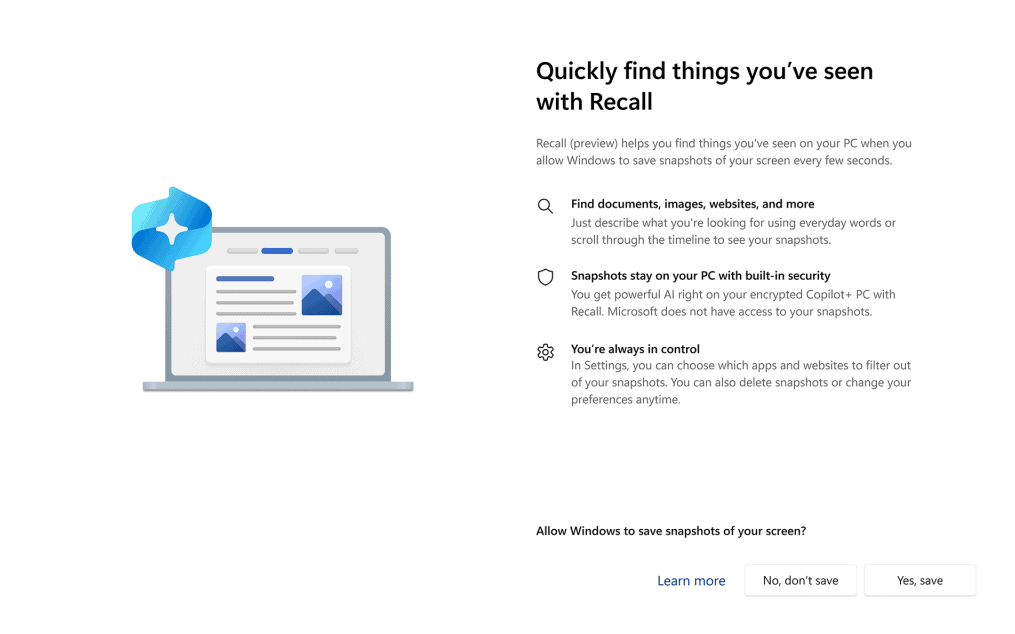

Recall was pitched as a personal time machine: a local vector index that lets Copilot search everything you have seen on screen. The first demos looked magical. Then researchers inspected the early builds and found that the SQLite database storing snapshots was easy to grab, unencrypted at rest for standard users, and potentially exposed to any malware that gained a foothold. Privacy advocates immediately framed it as a self-built spyware engine. Within days, Microsoft shifted from hype to damage control.
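To make the researchers' concern concrete, here is a minimal Python sketch of why a plaintext SQLite file in a user folder is easy to grab. The table name, columns, and file name are invented for illustration and are not Recall's actual schema; the point is only that any program running as the same user can open an unencrypted database without extra authentication.

```python
import os
import sqlite3
import tempfile


def write_snapshot_db(path):
    """Create a plaintext SQLite file with a made-up snapshot table (hypothetical schema)."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE snapshots (taken_at TEXT, app TEXT, screen_text TEXT)")
    conn.execute(
        "INSERT INTO snapshots VALUES (?, ?, ?)",
        ("2024-06-01T09:00:00", "browser", "patient portal: lab results"),
    )
    conn.commit()
    conn.close()


def read_as_other_program(path):
    """Stand-in for any other program running as the same user: it just opens the file."""
    conn = sqlite3.connect(path)
    rows = conn.execute("SELECT app, screen_text FROM snapshots").fetchall()
    conn.close()
    return rows


def demo():
    # Write the database, then read it back with no key, password, or prompt.
    with tempfile.TemporaryDirectory() as tmp:
        db_path = os.path.join(tmp, "snapshots.db")
        write_snapshot_db(db_path)
        return read_as_other_program(db_path)
```

Nothing in the reader function needs a key or a biometric check, which is the gap that encryption at rest and a protected storage location are meant to close.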

Speed vs. Safety Debt

Microsoft has been racing to keep pace with OpenAI and Google on AI features. That speed created safety debt. The initial plan required only a Windows Hello login to access Recall. There was no default BitLocker enforcement, and the database sat in a user folder rather than inside a hardened enclave. Critics argued that Redmond prioritized developer velocity over threat modeling. The company is now rewriting that story with encryption by default, TPM 2.0 binding, and mandatory user opt-in.

Why This Matters Beyond Windows

The Recall blowback is a cautionary tale for every platform sprinting to bolt AI into core UX. If Microsoft cannot convince users that on-device AI memory is safe, smaller vendors will face even fiercer skepticism. Regulators are already circling. Expect data protection authorities to treat AI-derived context as sensitive data, demanding explicit consent and verifiable deletion paths. The failure mode is not just bad press – it could be fines, blocked rollouts, and slowed adoption of on-device AI across the industry.

Deep Dive: Rebuilding Trust in Microsoft Recall

To salvage Recall, Microsoft must show its homework. That means hardening the stack, exposing admin controls, and proving that productivity benefits outweigh the risks.

Locking Down the Data Path

Microsoft now promises that Recall data will be encrypted at rest, gated by Windows Hello, and stored within a protected directory that resists standard process access. The company also plans to block screenshots of sensitive surfaces such as DRM video, password fields, and protected PDFs. That is table stakes. The real test is whether the kernel-level hooks can prevent malicious processes from scraping the dataset once a user session is compromised. Endpoint detection partners will need new signatures to flag Recall scraping behavior.

Opt-In Is Not a Panacea

Turning Recall into an opt-in feature is the right political move, but it does not erase the risk calculus. Users need transparent onboarding that spells out what is captured, how long it is kept, and how to purge it instantly. An obvious toggle in Settings is essential, but so are per-app exclusions and scheduled deletion policies. Enterprise admins will demand Intune policies that let them disable or severely restrict Recall on managed fleets, plus reporting hooks to prove compliance.
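The controls described above can be pictured as a small policy object. The following Python sketch is purely illustrative: `CapturePolicy`, `should_capture`, and `is_expired` are hypothetical names, not Microsoft APIs or Intune settings. It assumes a conservative default (capture off until the user opts in), a per-app exclusion list, and a retention window.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class CapturePolicy:
    """Hypothetical capture policy; field names are assumptions for illustration."""
    enabled: bool = False                    # opt-in: off unless the user turns it on
    retention_days: int = 30                 # snapshots older than this get purged
    excluded_apps: frozenset = frozenset()   # apps that must never be captured


def should_capture(policy, app_name):
    """Capture only when the feature is on and the app is not excluded (case-insensitive)."""
    excluded = {name.lower() for name in policy.excluded_apps}
    return policy.enabled and app_name.lower() not in excluded


def is_expired(policy, taken_at, now):
    """True when a snapshot has outlived the retention window."""
    return now - taken_at > timedelta(days=policy.retention_days)
```

A scheduled purge job would then delete every snapshot for which `is_expired` returns true, and an admin-enforced policy would simply pin `enabled` to false on managed devices.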

Performance and Storage Headroom

Recall rides on NPUs and local storage. Early tests showed the snapshot index could grow into tens of gigabytes over weeks. On thin-and-light laptops with 512GB drives, that is a real tax. Microsoft must expose granular storage caps and purge schedules. Otherwise, users will associate Recall with fan spin-ups and storage warnings instead of seamless retrieval.
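A storage cap of the kind called for here could work roughly as follows. This is a sketch under stated assumptions, not how Recall manages its index: `purge_to_cap` is a hypothetical helper that keeps the newest snapshots until the cap would be exceeded and marks everything older for deletion.

```python
def purge_to_cap(snapshots, cap_bytes):
    """Split snapshots into (kept, purged) so the kept set fits under cap_bytes.

    snapshots: iterable of (taken_at, size_bytes) pairs, where taken_at is any
    sortable timestamp. Newest snapshots are kept first; once one does not fit,
    it and everything older are purged.
    """
    newest_first = sorted(snapshots, key=lambda snap: snap[0], reverse=True)
    kept, total = [], 0
    for index, (taken_at, size_bytes) in enumerate(newest_first):
        if total + size_bytes > cap_bytes:
            return kept, newest_first[index:]
        kept.append((taken_at, size_bytes))
        total += size_bytes
    return kept, []
```

For example, with three 40-byte snapshots and a 100-byte cap, the two newest are kept and the oldest is purged. Cutting off at the first snapshot that does not fit keeps the retained history contiguous, which is one possible design choice among several.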

Market Impact: Copilot+ Momentum Stalls

Recall was the headliner for Copilot+ PCs, making Qualcomm Snapdragon X Elite laptops feel futuristic. The pause puts OEMs in a bind. Launch hardware still ships, but the flagship capability is sidelined until a future update. That dulls the upgrade pitch for consumers and gives rivals like Apple breathing room to position their own on-device AI stories without the same privacy baggage. It also invites questions about how tightly Microsoft vetted partners and whether the Copilot+ badge will become synonymous with beta features.

Enterprise Adoption on Hold

Corporate buyers already wary of shadow data flows will slow-roll any feature that could expose IP. Without a proven security model, Recall will stay off in most managed environments. That could undermine Microsoft’s goal of making Copilot the default digital assistant across productivity stacks. If Recall-like features remain consumer-only, the ecosystem becomes fragmented, and developers will hesitate to build experiences that rely on that index.

Developer Ecosystem Signals

Recall was supposed to be a platform primitive: third-party apps could query the context index to deliver smarter results. Pausing the rollout delays that API maturation. Developers may pivot to building their own on-device context caches, which could fracture the Windows AI story. Microsoft needs to publish a clear roadmap, including SDK security guarantees and sandbox requirements, to keep developers engaged.

What Comes Next for Microsoft Recall

Expect a staged comeback. Microsoft will likely ship Recall to Windows Insiders first with enhanced telemetry, then roll to retail once security partners sign off. Watch for third-party audits and potentially a public bug bounty focused on Recall data paths. The company may also integrate Pluton hardware security or VBS-style isolation to further harden the feature.

Pro Tips for Users

- When Recall returns, set a tight retention window and storage cap on day one.

- Create Group Policy templates that disable Recall on work profiles while allowing it on personal sessions.

- Use BitLocker and verified boot to reduce the blast radius of physical theft.

- Exclude finance, legal, and password manager apps from Recall capturing.

Why This Matters

The Recall saga is not just a Microsoft misstep – it is a preview of the AI UX wars ahead. Every platform wants continuous, contextual memory to power assistants. But consumers will only embrace it when privacy controls are visible, defaults are conservative, and security designs are peer-reviewed. Microsoft has the scale to reset expectations. If it succeeds, on-device AI memory could redefine how we navigate digital life. If it fails, the backlash will become a case study in how not to ship AI.

“Users will accept AI memory only when it feels like their vault, not the operating system’s surveillance layer.”

For now, the smartest move is to treat Recall as a beta idea rather than a shipping promise. Microsoft must earn the right to remember everything by showing it can forget responsibly.
