Snowflake data breach exposes brittle cloud supply chain

The Snowflake data breach landed like a gut punch for every enterprise that believed outsourcing storage meant outsourcing risk. Attackers looted customer datasets, from banks to ticketing giants, by abusing weak vendor controls and stale credentials. This is not just another incident report – it is a referendum on how the cloud era handles identity, telemetry, and shared responsibility. If your business still treats third-party access as a convenience instead of a critical attack surface, the lesson is simple: you are already late.

- Attacks exploited reused or unrotated credentials and broad vendor access to sensitive tables.
- Slow anomaly detection showed how little live telemetry many customers collect from SaaS data layers.
- Regulators will likely force clearer lines of accountability across cloud supply chains.
- Enterprises must harden identity, observability, and backup posture around third-party platforms.

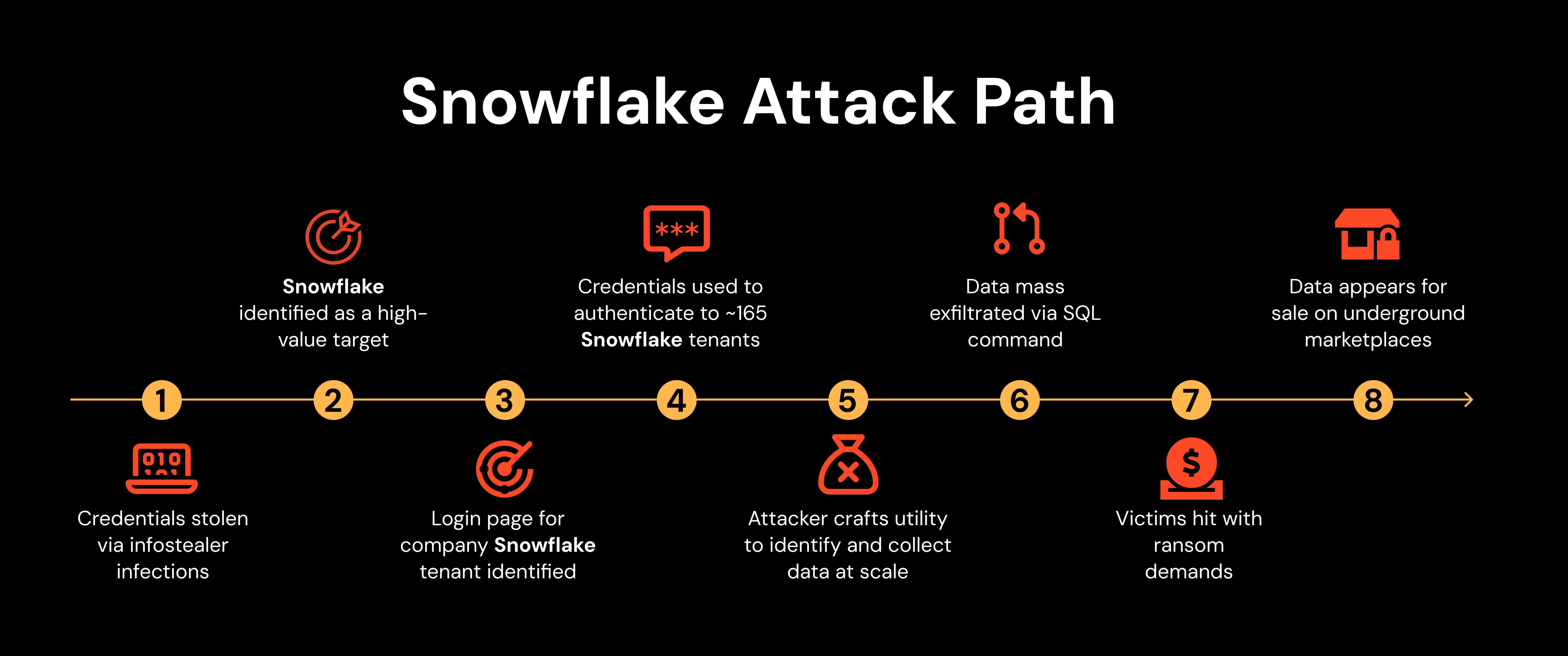

How the breach unfolded

Initial forensics point to compromised Snowflake service accounts, some tied to contractors, being used to exfiltrate bulk records. Instead of smashing through perimeter defenses, attackers simply walked through doors left open by permissive role scopes and forgotten keys. Once inside, standard SQL queries were enough to stage and siphon sensitive exports.

Credential reuse and vendor sprawl

Too many organizations still allow long-lived API keys and weak multi-factor enforcement for partner logins. In this case, attackers reportedly leveraged credentials associated with a support vendor and pivoted across multiple customer environments. That is the nightmare scenario: one compromised contractor unlocking dozens of high-value datasets.

Expert take: Treat every partner identity like a production admin account. If it can touch sensitive data, it deserves short-lived tokens, device checks, and tight scoping.
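To make "short-lived tokens and tight scoping" concrete, here is a minimal Python sketch, assuming a hypothetical in-house token format: an HMAC-signed set of claims carrying a subject, a list of scopes, and an expiry 15 minutes out. The names `SIGNING_KEY`, `issue_token`, and `check_token` are illustrative only, not part of Snowflake's or any identity provider's API; a production deployment would use a vetted OAuth 2.0 or JWT library rather than hand-rolled tokens.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

# Hypothetical signing secret; in practice it belongs in an HSM or secrets manager.
SIGNING_KEY = b"example-secret-rotate-me"


def issue_token(subject: str, scopes: List[str], ttl_seconds: int = 900,
                now: Optional[float] = None) -> str:
    """Issue an HMAC-signed token that expires ttl_seconds after issuance."""
    issued = time.time() if now is None else now
    claims = {"sub": subject, "scopes": sorted(scopes), "exp": issued + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    sig = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + sig


def check_token(token: str, required_scope: str, now: Optional[float] = None) -> bool:
    """Accept only a correctly signed, unexpired token that carries the required scope."""
    try:
        payload, sig = token.rsplit(".", 1)
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False  # tampered, or signed with a different key
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    if current >= claims["exp"]:
        return False  # expired: the partner must re-authenticate
    return required_scope in claims["scopes"]
```

The design point is that a stolen token is worth little: it stops working once the TTL lapses and cannot reach anything outside its scopes.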

Why detection lagged

Bulk extraction of terabytes should have set off alarms, yet the breach simmered for days. Many customers rely on platform defaults that log access but do not alert in real time. Without baseline models of normal query patterns, unusual SELECT storms fly under the radar. The lesson: streaming telemetry into a SIEM or SOAR is not optional for third-party data stores.
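A baseline does not need to be sophisticated to catch a SELECT storm. The sketch below assumes you already aggregate a daily per-user metric (rows returned or bytes scanned, for example, derived from the platform's query logs) and flags any identity whose value today sits more than three standard deviations above its own history. The function name, thresholds, and input shape are hypothetical choices for illustration.

```python
from statistics import mean, stdev
from typing import Dict, List


def flag_anomalies(history: Dict[str, List[float]], today: Dict[str, float],
                   z_threshold: float = 3.0, min_samples: int = 7) -> List[str]:
    """Return users whose metric today is far above their own historical baseline."""
    flagged = []
    for user, observed in today.items():
        past = history.get(user, [])
        if len(past) < min_samples:
            # No usable baseline yet: treat brand-new identities as suspicious.
            flagged.append(user)
            continue
        mu = mean(past)
        sigma = stdev(past)
        if sigma == 0:
            # Perfectly flat history: any increase is a deviation.
            if observed > mu:
                flagged.append(user)
            continue
        if (observed - mu) / sigma > z_threshold:
            flagged.append(user)
    return flagged
```

New identities are flagged by default, on the reasoning that an unfamiliar account pulling data is itself worth a look. Real deployments would tune `z_threshold` and `min_samples`, and might prefer a robust statistic such as the median, because one earlier spike inflates the standard deviation.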

Snowflake data breach fallout

Enterprises now face regulatory scrutiny and reputational damage. Regulators will ask why background checks on vendors did not extend to continuous validation of their access. Cyber insurers will question whether shared responsibility was translated into enforceable controls.

Key insight: Shared responsibility is not a get-out-of-jail-free card. If your vendor sits between attackers and customer data, you own the blast radius.

Customer trust on the line

Consumers do not care which subcontractor lost the keys; they blame the brand on their bank statement or ticket stub. A cloud provider breach erodes trust faster because data aggregation multiplies risk. Companies must communicate clearly about containment steps, credit monitoring, and how they are hardening access.

Operational debt becomes breach fuel

Lingering technical debt – unused service accounts, open network rules, absent least privilege – becomes combustible in a supply chain incident. The breach shows how ignoring hygiene in third-party environments accelerates attacker dwell time and damage.
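Finding dormant accounts is a mechanical job. This sketch assumes a hypothetical mapping from account name to last successful login (with `None` for accounts that never logged in), which you might assemble from login history exports, and lists every account idle longer than a chosen window. The 90-day default is an assumption, not a standard.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Optional


def find_stale_accounts(last_login: Dict[str, Optional[datetime]], now: datetime,
                        max_idle_days: int = 90) -> List[str]:
    """Return accounts (sorted by name) that never logged in or have been idle too long."""
    cutoff = now - timedelta(days=max_idle_days)
    stale = []
    for account, seen in sorted(last_login.items()):
        if seen is None or seen < cutoff:
            stale.append(account)  # candidate for disabling and credential revocation
    return stale
```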

Snowflake data breach: what to fix now

The quickest wins start with identity. Rotate every partner credential, enforce MFA with hardware keys, and move to short-lived, scoped OAuth tokens. Layer on conditional access that binds logins to managed devices and approved networks.
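As a rough illustration of binding logins to managed devices and approved networks, the following Python sketch evaluates a single login attempt against three conditions. The `LoginAttempt` fields, the `mfa_method` labels, and the approved ranges (reserved documentation networks) are all hypothetical; in practice this logic lives in your identity provider's conditional access engine rather than in application code.

```python
import ipaddress
from dataclasses import dataclass

# Hypothetical approved egress ranges (documentation-only example networks).
APPROVED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


@dataclass
class LoginAttempt:
    user: str
    source_ip: str
    device_managed: bool
    mfa_method: str  # hypothetical labels, e.g. "hardware_key", "totp", "none"


def allow_login(attempt: LoginAttempt) -> bool:
    """Allow only hardware-key MFA from a managed device on an approved network."""
    ip = ipaddress.ip_address(attempt.source_ip)
    on_approved_network = any(ip in net for net in APPROVED_NETWORKS)
    return (attempt.device_managed
            and on_approved_network
            and attempt.mfa_method == "hardware_key")
```

Every condition must hold, so a valid password plus a stolen TOTP code from an unmanaged laptop is still refused.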

- Lock down roles: Audit `ROLE` scopes and strip default privileges from service accounts.
- Instrument telemetry: Stream `query_history` and `login_history` into your detection stack; build alerts for abnormal export sizes.
- Backups with air gaps: Store immutable copies outside the affected platform so you can recover even if it is compromised.
- Column-level encryption: Encrypt columns holding PII so stolen dumps are harder to monetize.
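Auditing role scopes amounts to diffing what is granted against what should be granted. The sketch assumes a hypothetical least-privilege allowlist per service account and a flat list of `(account, privilege)` pairs exported from the platform's grant listings; the `PRIVILEGE:schema.table` string format is invented for the example.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical least-privilege allowlist: the only grants each account should hold.
ALLOWED: Dict[str, Set[str]] = {
    "etl_svc": {"SELECT:raw.events", "INSERT:staging.events"},
    "bi_svc": {"SELECT:marts.revenue"},
}


def excess_grants(grants: List[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Return, per account, every granted privilege that is not on its allowlist."""
    excess: Dict[str, List[str]] = {}
    for account, privilege in grants:
        # Accounts missing from ALLOWED get an empty allowlist, so all their grants are flagged.
        if privilege not in ALLOWED.get(account, set()):
            excess.setdefault(account, []).append(privilege)
    return excess
```

Because unknown accounts default to an empty allowlist, a forgotten vendor service account surfaces immediately instead of slipping through.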

Incident response must include tabletop exercises focused on vendor compromise. Simulate how you would revoke access, rotate secrets, and communicate with regulators within hours, not days.

Pro tips for resilience

Label every external integration with its data sensitivity and required controls. Implement just-in-time (JIT) access for admins so high-risk permissions auto-expire. And insist on contractual clauses that mandate hardware MFA for vendor staff.
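Just-in-time access reduces to attaching an expiry to every elevated grant and refusing it once the clock runs out. The `JitGrants` class below is a hypothetical in-memory sketch of that idea; a real broker would persist grants, require approval before issuing them, and log every grant and check.

```python
import time
from typing import Dict, Optional, Tuple


class JitGrants:
    """Hypothetical in-memory broker in which elevated permissions expire automatically."""

    def __init__(self) -> None:
        # Maps (user, permission) to the absolute expiry timestamp.
        self._grants: Dict[Tuple[str, str], float] = {}

    def grant(self, user: str, permission: str, ttl_seconds: int,
              now: Optional[float] = None) -> None:
        start = time.time() if now is None else now
        self._grants[(user, permission)] = start + ttl_seconds

    def is_allowed(self, user: str, permission: str, now: Optional[float] = None) -> bool:
        current = time.time() if now is None else now
        expiry = self._grants.get((user, permission))
        if expiry is None:
            return False  # never granted
        if current >= expiry:
            del self._grants[(user, permission)]  # lazily purge the expired grant
            return False
        return True
```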

Pro tip: If a partner cannot produce tamper-evident logs and proof of hardware-backed authentication, they should not touch production data.
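One common way to make logs tamper-evident is a hash chain: each entry stores the SHA-256 hash of the previous entry's hash plus its own event text, so editing any past entry breaks every link after it. The following is a minimal sketch of that technique; the function names and entry layout are assumptions, not a format any particular vendor is known to use.

```python
import hashlib
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_entry(chain: List[Dict[str, str]], event: str) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev = chain[-1]["hash"] if chain else GENESIS
    digest = hashlib.sha256((prev + event).encode()).hexdigest()
    chain.append({"event": event, "prev": prev, "hash": digest})


def verify_chain(chain: List[Dict[str, str]]) -> bool:
    """Recompute every link; any edited, removed, or reordered entry fails the check."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev:
            return False
        if hashlib.sha256((prev + entry["event"]).encode()).hexdigest() != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

A chain only proves integrity if its latest hash is anchored somewhere the log writer cannot rewrite, such as a separate system you control; otherwise an attacker could simply recompute the whole chain.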

Why this breach matters

This is a wake-up call about cloud monoculture. When many enterprises centralize data on a single platform, that platform becomes a systemic risk. A failure in identity hygiene cascades across sectors, from finance to entertainment.

It also underscores that compliance checklists are not security strategies. Many affected organizations were likely compliant on paper yet missed obvious gaps like unmonitored exports. Regulators may respond with stricter guidance on third-party telemetry, breach reporting timelines, and proof of least privilege enforcement.

The future of SaaS risk

Expect a rise in confidential computing and customer-managed keys to reduce reliance on provider-controlled access. More enterprises will push for BYOK (bring your own key) and HSM-backed controls so stolen credentials alone cannot unlock data. Providers will need to ship better native anomaly detection to avoid becoming soft targets.

Ultimately, the Snowflake data breach shows that cloud convenience does not absolve security diligence. The next generation of contracts, architectures, and monitoring strategies must assume vendors can be compromised and design blast radii accordingly.
