Unmasking the AI Apocalypse Meme Spiral

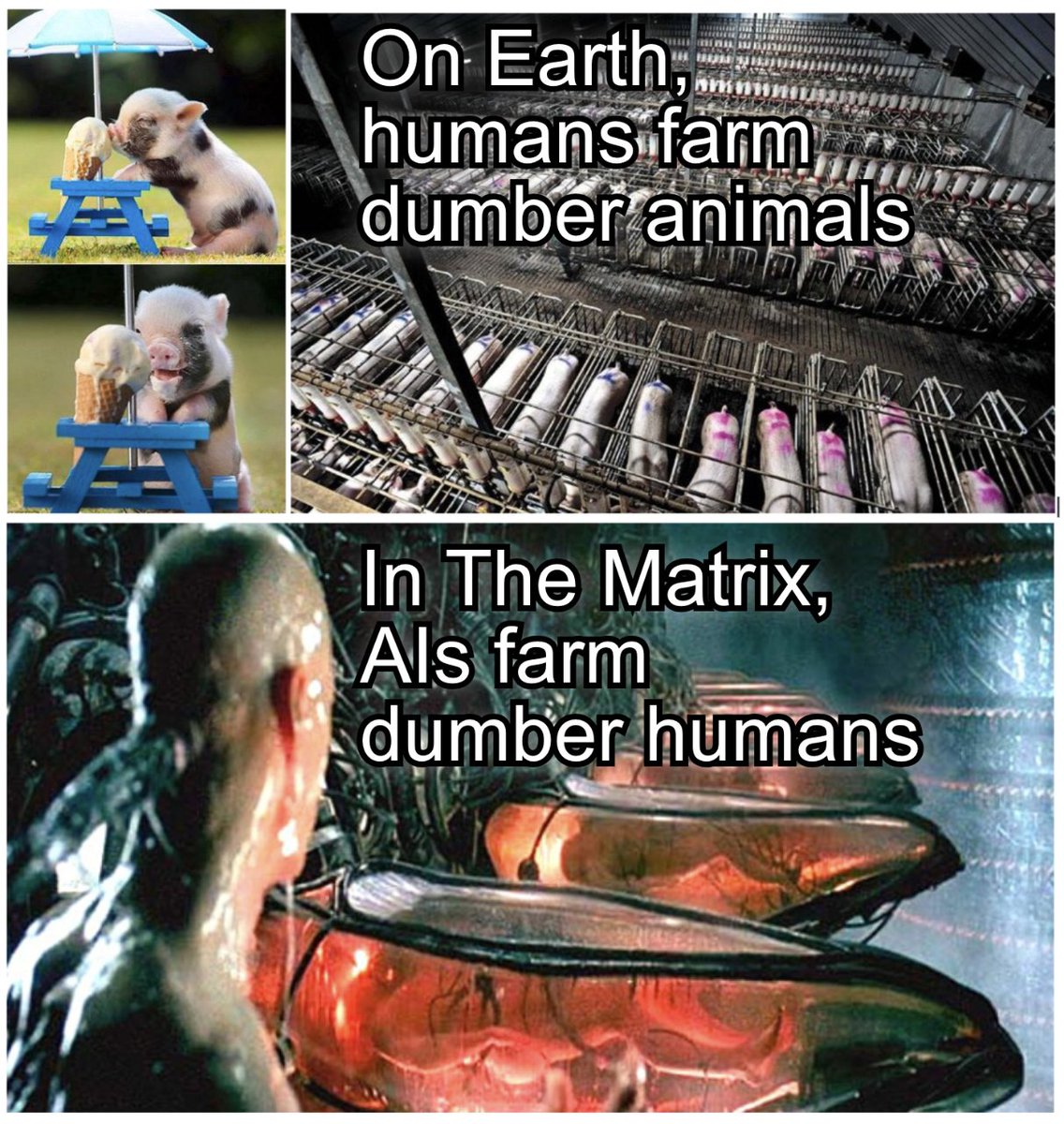

AI apocalypse memes are everywhere: doomscrolling feeds, boardroom decks, even product roadmaps. The phrase "AI apocalypse" has become viral shorthand for existential dread, but beneath the jokes lies a serious feedback loop shaping how policymakers, founders, and users think about intelligence, labor, and safety. The stakes are real: overblown fears can freeze innovation, while complacency invites sloppy deployments. This piece dissects how a meme economy hijacked the conversation, which signals matter, and how to separate brain-rot vibes from operational risk. If you build or regulate AI, understanding the memetic vector is the surest way to step out of the apocalypse theater and focus on outcomes that actually move the needle.

- AI apocalypse memes now influence funding pitches, safety debates, and public trust.

- The real risks hide in boring places: governance debt, data curation, and deployment hygiene.

- Memes compress nuance; leaders must re-expand context before making policy or product bets.

- Future-proofing AI requires transparent benchmarks, safety guardrails, and clear user education.

The Meme Singularity: How Jokes Became Strategy

Memes that dramatize model takeovers, rogue agents, or paperclip-maximizer scenarios have leapt from niche forums into investor memos. The result: some executives cite viral threads as risk framing, redirecting capital toward speculative alignment research while neglecting basic reliability engineering. This shift mirrors past tech cycles where imagery outpaced evidence, turning perception into policy. When a meme becomes a mental shortcut, it steers roadmaps without scrutiny.

Memetic Velocity Versus Empirical Signal

Memes travel faster than peer review. A pithy "AI ends humanity" clip reaches millions before a single benchmark chart loads. That velocity tilts discourse toward spectacle and away from sober metrics like p99 latency, fine-tuning evals, or red-teaming findings. Leaders chasing virality risk misallocating resources to headline risk instead of systemic risk.

Pro Tip: Anchor every apocalyptic claim to at least one operational metric. If the meme cannot map to eval-suite results or safety-scorecard deltas, treat it as entertainment, not evidence.
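A minimal sketch of that triage rule in Python, assuming a team keeps a named set of metrics it actually tracks. The metric names, the `Claim` record, and the `classify_claim` helper are hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass, field

# Hypothetical names for metrics a team actually tracks; substitute your own.
TRACKED_METRICS = frozenset(
    {"latency_p99", "fine_tune_eval", "red_teaming_findings", "safety_scorecard"}
)


@dataclass
class Claim:
    text: str
    # Operational metrics the claim cites as support (may be empty).
    metrics: list[str] = field(default_factory=list)


def classify_claim(claim: Claim, tracked: frozenset[str] = TRACKED_METRICS) -> str:
    """Label a claim 'evidence' if it cites at least one tracked metric, else 'entertainment'."""
    if any(metric in tracked for metric in claim.metrics):
        return "evidence"
    return "entertainment"


print(classify_claim(Claim("AI ends humanity")))                         # entertainment
print(classify_claim(Claim("p99 regressed after v2", ["latency_p99"])))  # evidence
```

The rule is deliberately blunt: it only checks whether a claim cites a tracked metric at all, not whether that metric actually moved.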

Why AI Apocalypse Memes Stick

Fear sells because it simplifies: complex tradeoffs become binary survival tales. That emotional clarity helps ideas spread but distorts the underlying threat model. When alignment-failure imagery eclipses mundane risks like data poisoning or shadow deployments, organizations build for cinematic failure modes while the real cracks form in logging, monitoring, and policy compliance.

Memes compress nuance; robust AI strategies re-expand it before committing code or capital.

Inside the Brain-Rot Cycle

The brain-rot narrative suggests our attention spans erode under constant algorithmic feeds, making us easier to sway with catastrophic storytelling. In practice, the same loops that keep users scrolling also keep leaders chasing hype. The cure is slow thinking: deliberate audits, external reviews, and evidence-weighted decisions.

Feedback Loops That Reinforce Hype

Each viral post raises the baseline fear, prompting more extreme takes. Media coverage amplifies it, venture decks mirror it, and product teams quietly adjust scope to match perceived sentiment. This self-referential loop crowds out nuanced conversations about model-card transparency, dataset licenses, or human-in-the-loop QA.

Pro Tip: Insert a memetic-review checkpoint into product planning. Ask: Are we solving a meme or a measured problem? If the answer leans meme, reframe the objective using logs, user studies, and audit trails.

Decision Debt from Meme-Driven Choices

Rushed pivots create governance debt. Skipping a risk assessment to ship an “AI apocalypse” mitigation feature can backfire if the feature ignores data retention, consent, or eval coverage. Debt accumulates quietly: undocumented overrides, untested guardrails, and brittle prompts that collapse under distribution shift.
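One way to make that quiet debt visible is a periodic audit over guardrail configuration. The sketch below assumes each guardrail is tracked as a small record; the `Guardrail` fields and the `audit_governance_debt` helper are hypothetical, not the schema of any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Guardrail:
    name: str
    has_tests: bool
    overridden: bool = False
    # Written justification for an override; None means the override is undocumented.
    override_reason: Optional[str] = None


def audit_governance_debt(guardrails: list[Guardrail]) -> list[str]:
    """Return one human-readable debt item per problem found."""
    debt = []
    for g in guardrails:
        if not g.has_tests:
            debt.append(f"{g.name}: untested guardrail")
        if g.overridden and not g.override_reason:
            debt.append(f"{g.name}: undocumented override")
    return debt


rules = [
    Guardrail("pii_filter", has_tests=True),
    Guardrail("jailbreak_block", has_tests=False, overridden=True),
]
for item in audit_governance_debt(rules):
    print(item)
# jailbreak_block: untested guardrail
# jailbreak_block: undocumented override
```

Brittle prompts are harder to catch with a static audit like this; they only show up when you evaluate on shifted data.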

The scariest failure mode is not a runaway model; it is silent misalignment between policy and product enforced through sloppy defaults.

What Actually Matters: The Operational Layer

Many AI incidents come from predictable sources: mis-specified prompts, stale data, missing monitoring, or unclear rollback paths. While memes dramatize existential collapse, the near-term risk lies in everyday reliability. Treating apocalypse jokes as a design spec is like reinforcing the vault while leaving the front door open.

Alignment Is More Than Philosophy

Real-world alignment work is hands-on: writing guardrail rules, auditing content filters, and tracing model-call lineage. It demands data versioning, reproducible training scripts, and granular access controls. When leadership frames alignment solely as an existential question, teams miss the chance to ship practical safeguards such as output moderation, prompt grounding, and human feedback loops.
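To show what "tracing model-call lineage" can look like in practice, here is a minimal Python sketch that wraps a model call, applies a naive keyword filter as a stand-in for output moderation, and appends a lineage record. The `traced_call` wrapper, the `CallRecord` fields, and the echo "model" are all hypothetical; a production system would use a real moderation classifier and durable log storage.

```python
import hashlib
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class CallRecord:
    model_version: str
    prompt_sha256: str
    output_sha256: str
    blocked: bool
    timestamp: float


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def traced_call(
    model: Callable[[str], str],
    model_version: str,
    prompt: str,
    blocklist: set[str],
    lineage: list[CallRecord],
) -> str:
    """Call the model, apply a naive keyword filter, and append a lineage record."""
    output = model(prompt)
    # Naive output moderation: blocklist terms are assumed to be lowercase.
    blocked = any(term in output.lower() for term in blocklist)
    lineage.append(
        CallRecord(model_version, _sha256(prompt), _sha256(output), blocked, time.time())
    )
    return "[withheld by output moderation]" if blocked else output


# Usage with a stand-in "model" that simply echoes its prompt.
lineage: list[CallRecord] = []
echo = lambda p: p
print(traced_call(echo, "demo-v1", "hello", {"secret"}, lineage))            # hello
print(traced_call(echo, "demo-v1", "the SECRET plan", {"secret"}, lineage))  # [withheld by output moderation]
print(len(lineage))                                                          # 2
```

Hashing prompts and outputs instead of storing them raw keeps the lineage log free of user text while still letting you match a record to a specific call.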

Governance Plumbing Beats Apocalypse Theater

A credible safety posture depends on transparent reporting. Publish model-card updates, maintain incident-log entries, and enforce change-management gates. Add chaos testing for inference endpoints, automate rollback scripts, and track p0/p1 incidents with root-cause analysis. These basics inoculate against both mundane outages and meme-fueled reputational storms.
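A change-management gate can be as simple as a function that refuses a release until a few preconditions hold. The sketch below assumes three of the checks named above: passing evals, a scripted rollback, and no open p0 incidents. The `Incident` and `ChangeRequest` records and the `change_gate` helper are hypothetical, and "open until a root cause is recorded" is a simplification.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Incident:
    incident_id: str
    severity: str  # "p0" or "p1" in this sketch
    root_cause: Optional[str] = None  # filled in after root-cause analysis

    @property
    def is_open(self) -> bool:
        # Simplification: an incident stays open until a root cause is recorded.
        return self.root_cause is None


@dataclass
class ChangeRequest:
    model_version: str
    evals_passed: bool
    rollback_command: Optional[str] = None  # e.g. a scripted rollback


def change_gate(change: ChangeRequest, incident_log: list[Incident]) -> tuple[bool, list[str]]:
    """Approve a change only if evals pass, a rollback exists, and no p0 is open."""
    reasons = []
    if not change.evals_passed:
        reasons.append("evals not passing")
    if not change.rollback_command:
        reasons.append("no rollback path")
    open_p0 = [i.incident_id for i in incident_log if i.severity == "p0" and i.is_open]
    if open_p0:
        reasons.append("open p0 incidents: " + ", ".join(open_p0))
    return (not reasons, reasons)


log = [Incident("INC-1", "p0")]
print(change_gate(ChangeRequest("v2", evals_passed=True, rollback_command="rollback v1"), log))
# (False, ['open p0 incidents: INC-1'])
```

Returning the list of reasons, not just a boolean, gives reviewers an audit trail for every blocked release.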

The Economic Gravity of AI Apocalypse Memes

Memes influence markets. Fear can unlock funding for speculative alignment startups while starving applied safety teams. It also shapes hiring: candidates gravitate to roles promising to “save humanity” rather than roles that maintain eval-pipeline hygiene. The opportunity cost is tangible: delayed launches, duplicated infrastructure, and policy gaps.

Investor Optics and Risk Pricing

When LPs ask about existential risk, GPs demand a narrative hedge. Startups respond with slides on kill-switch modules while overlooking service-level objectives. This dynamic skews valuations and distracts from customer-centric metrics like NPS, churn, or time to resolution.

Capital follows stories; resilience follows discipline. Choose discipline.

Policy Windows and Regulatory Overcorrection

Legislators encountering AI apocalypse memes may overcorrect with blunt regulation. Overbroad bans can entrench incumbents and penalize open research. The antidote is precision: articulate risk tiers, require transparent evaluation reports, and mandate incident disclosure without smothering experimentation.
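To make "articulate risk tiers" concrete, here is an illustrative tiering function in Python. The tier names, the two classification questions, and the obligations attached to each tier are assumptions for this sketch; they are not taken from any specific statute or regulator.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2


# Illustrative obligations per tier; higher tiers include lower-tier duties.
OBLIGATIONS = {
    RiskTier.MINIMAL: [],
    RiskTier.LIMITED: ["publish evaluation report"],
    RiskTier.HIGH: ["publish evaluation report", "disclose incidents", "third-party audit"],
}


def assign_tier(affects_rights_or_finances: bool, user_facing: bool) -> RiskTier:
    """Map two coarse deployment questions to a risk tier."""
    if affects_rights_or_finances:
        return RiskTier.HIGH
    if user_facing:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


print(OBLIGATIONS[assign_tier(affects_rights_or_finances=False, user_facing=True)])
# ['publish evaluation report']
```

The point is proportionality: internal, low-impact experiments carry no extra obligations, so research is not smothered, while consequential deployments carry the heaviest ones.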

How to Detox the Narrative

The best way to counter brain-rot is to raise the resolution of the conversation. Replace cinematic metaphors with specific failure modes and concrete controls. Treat users as partners by explaining how filters, logging, and the appeals process work. Transparency defangs fear.

Reframing with Evidence

Before invoking AI apocalypse memes to justify a decision, teams should surface data: benchmark drift, red-team results, fairness audits. Summarize them in user-facing release notes. Invite third-party audits and publish RAG source citations where applicable. Evidence slows the meme spiral and grounds debate.
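Benchmark drift is straightforward to compute and summarize. The Python sketch below diffs two evaluation runs and renders release-note lines; it assumes higher scores are better for every benchmark, and the benchmark names, the 0.01 tolerance, and both helpers are hypothetical.

```python
def benchmark_drift(previous: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Score change for every benchmark present in both runs."""
    return {name: current[name] - previous[name] for name in previous.keys() & current.keys()}


def release_note_lines(drift: dict[str, float], tolerance: float = 0.01) -> list[str]:
    """Render drift as release-note lines, assuming higher scores are better."""
    lines = []
    for name in sorted(drift):
        delta = drift[name]
        if delta < -tolerance:
            status = "regressed"
        elif delta > tolerance:
            status = "improved"
        else:
            status = "unchanged"
        lines.append(f"{name}: {status} ({delta:+.3f})")
    return lines


previous = {"qa_accuracy": 0.80, "safety_eval": 0.90}
current = {"qa_accuracy": 0.80, "safety_eval": 0.85, "new_bench": 0.50}
for line in release_note_lines(benchmark_drift(previous, current)):
    print(line)
# qa_accuracy: unchanged (+0.000)
# safety_eval: regressed (-0.050)
```

Benchmarks present in only one run (here `new_bench`) are dropped from the diff; a real release note should list them separately so nothing disappears silently.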

Designing for Human Agency

Give users levers: toggleable safety modes, clear report-abuse channels, and visible model-version labels. Agency reduces helplessness, the emotional fuel of apocalyptic framing. It also creates feedback loops richer than retweets.
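Here is a small sketch of how those levers might travel with every response. It assumes an upstream classifier has already produced a moderation score in [0, 1] (higher means riskier); the mode names, thresholds, response fields, and `/report` endpoint are all hypothetical.

```python
# Hypothetical per-mode thresholds on a moderation score in [0, 1] (higher = riskier).
MODE_THRESHOLDS = {"strict": 0.3, "standard": 0.7}


def render_response(text: str, moderation_score: float, safety_mode: str, model_version: str) -> dict:
    """Package a model response with user-facing safety controls attached."""
    if safety_mode not in MODE_THRESHOLDS:
        raise ValueError(f"unknown safety mode: {safety_mode!r}")
    hidden = moderation_score >= MODE_THRESHOLDS[safety_mode]
    return {
        "text": "[hidden by your safety mode]" if hidden else text,
        "safety_mode": safety_mode,      # user-toggleable setting
        "model_version": model_version,  # visible version label
        "report_abuse": "/report",       # hypothetical report endpoint
    }


print(render_response("borderline answer", 0.5, "strict", "demo-v3")["text"])    # [hidden by your safety mode]
print(render_response("borderline answer", 0.5, "standard", "demo-v3")["text"])  # borderline answer
```

Because the mode and version ride along with the text, users can see which setting hid an answer and which model produced it, which is exactly the kind of agency the levers are meant to provide.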

Future Outlook: Beyond Apocalypse Theater

The next wave of AI discourse will hinge on observable performance, not speculative annihilation. As open benchmarks mature and synthetic data pipelines standardize, the market will reward systems that document provenance, safety posture, and energy footprint. Memes will persist, but they will compete with dashboards and disclosures.

Signals to Watch

Expect more granular eval-registry standards, policy pushes for audit-API access, and user demand for interpretable outputs. Companies that translate apocalypse anxiety into measurable reliability will earn trust faster than those selling cinematic fear.

Pro Tip: Build a standing meme sanity check into executive reviews: if a decision traces back to a viral take, pause and request logs. If it traces to incidents, proceed with mitigations.
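The routing rule in that tip fits in a few lines of Python. This is a sketch under stated assumptions: the `Decision` record and `meme_sanity_check` function are hypothetical, and the third branch (no provenance at all also triggers a pause) goes slightly beyond the tip as written.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    title: str
    viral_sources: list[str] = field(default_factory=list)  # links to threads, clips, posts
    incident_ids: list[str] = field(default_factory=list)   # entries in the incident log


def meme_sanity_check(decision: Decision) -> str:
    """Route an executive decision by where its justification comes from."""
    if decision.incident_ids:
        # Traces to real incidents: proceed, even if a viral take also exists.
        return "proceed with mitigations"
    if decision.viral_sources:
        return "pause: request logs"
    # Assumption beyond the tip: no provenance at all is also a reason to pause.
    return "pause: no evidence attached"


print(meme_sanity_check(Decision("Add kill switch", viral_sources=["thread-123"])))
# pause: request logs
```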

Why This Matters Now

The AI apocalypse meme craze is not harmless fun; it is a force shaping funding, regulation, and product scope. By recognizing how the brain-rot loop operates, leaders can immunize their strategy and redirect energy toward verifiable safety and reliability. The meme itself might never die, but with disciplined governance it will stop driving the roadmap.

Action Steps: audit your safety playbook, publish transparent model-card updates, and install memetic circuit breakers in decision processes. The future belongs to teams that respect user fears without being ruled by them.

The information provided in this article is for general informational purposes only. While we strive for accuracy, we make no guarantees about the completeness or reliability of the content. Always verify important information through official or multiple sources before making decisions.