Nvidia Redraws the AI Power Map

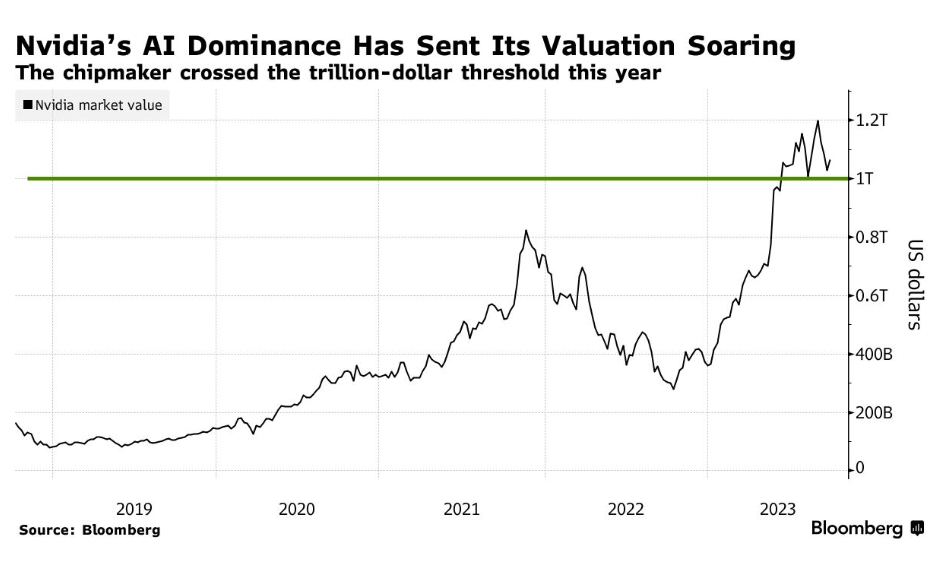

The AI boom has a gatekeeper, and right now it looks a lot like Nvidia. As demand for advanced chips surges, the company is no longer just a supplier tucked behind the scenes of gaming PCs and data centers. It has become one of the most consequential businesses in the global economy, shaping how fast AI can scale, who gets access to cutting-edge compute, and how much that future will cost. That matters far beyond Silicon Valley. If you are a business leader, investor, policymaker, or even just a user of AI products, the Nvidia story is really about leverage: who has it, who needs it, and what happens when one company sits at the center of a technological arms race.

- Nvidia has moved from chipmaker to infrastructure kingmaker in the AI economy.

- Its dominance is driven by more than hardware: software, developer lock-in, and supply chain scale matter just as much.

- Rivals are catching up, but replacing Nvidia inside AI data centers is far harder than buying an alternative chip.

- Governments and enterprises now have to treat AI compute as a strategic resource, not just a procurement line item.

- The next phase of the market will be defined by pricing pressure, geopolitics, and whether customers can escape dependence on one ecosystem.

Why Nvidia’s dominance matters now

The immediate story is simple: the generative AI frenzy created a ravenous appetite for high-performance chips, and Nvidia was ready before everyone else. But the deeper story is that AI infrastructure rewards whoever builds the most complete stack. A company can have powerful silicon, but if it lacks mature software tools, broad cloud support, and a developer base trained on its platform, adoption slows fast.

That is where Nvidia has been especially ruthless and effective. Its chips are powerful, yes, but the company also spent years turning its ecosystem into the default language of AI training and inference. For customers racing to deploy models, that default matters. Enterprises do not just buy chips. They buy time, predictability, support, and a lower risk of failure.

The real moat is not only the processor. It is the ecosystem around the processor.

This is why Nvidia keeps showing up at the center of discussions about cloud growth, AI startup valuations, and national technology strategy. When one company controls a critical choke point, every other player has to adapt around it.

How Nvidia built more than a chip business

The hardware lead became a platform advantage

At the heart of the company’s rise is a practical truth: AI workloads are brutally demanding. Training large models requires enormous parallel processing power, high-bandwidth memory, advanced networking, and efficient orchestration across massive clusters. Nvidia turned that complexity into a product strategy.

Its GPUs became the preferred engines for modern machine learning because they were good at the right task at the right moment. Then the company kept climbing the stack. Networking, interconnects, data center systems, and prebuilt AI infrastructure all helped make it harder for customers to mix and match alternatives.

That is the part of the story many casual observers miss. Buying a rival chip is one thing. Rebuilding a validated production environment around that rival is something else entirely.

CUDA is still the hidden weapon

If you want to understand why Nvidia remains so difficult to dislodge, look at CUDA. The software platform has become deeply embedded in AI development workflows, optimization libraries, research pipelines, and enterprise deployments. Developers know it, cloud providers support it, and AI teams often build around it by default.

That creates a form of lock-in that is more durable than raw benchmark performance. A competing chip can claim better economics or comparable speed, but if the migration path is painful, most customers stay where they are. In infrastructure, friction is destiny.

Pro tip: When evaluating AI hardware competition, watch for software portability and deployment tooling, not just chip performance slides. The switching cost often decides the winner.
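
To make the switching-cost argument concrete, here is a minimal sketch of a break-even calculation. The function name `breakeven_months` and every figure in it are hypothetical, chosen only to illustrate the arithmetic; none of them come from real vendor pricing.

```python
def breakeven_months(migration_cost, incumbent_monthly, challenger_monthly):
    """Months until running-cost savings repay a one-time migration cost.

    Returns None when the challenger is not cheaper to run, because
    the migration would then never pay for itself.
    """
    monthly_saving = incumbent_monthly - challenger_monthly
    if monthly_saving <= 0:
        return None
    return migration_cost / monthly_saving


# Purely illustrative numbers (assumptions, not market data):
# a 1.2M one-time porting effort against a 100k monthly saving.
months = breakeven_months(
    migration_cost=1_200_000,
    incumbent_monthly=500_000,
    challenger_monthly=400_000,
)
print(months)  # 12.0
```

Under these assumed numbers, a chip that is 20 percent cheaper to run still takes a full year to pay back the migration, which is the kind of friction that keeps buyers where they are.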

Nvidia and the economics of AI scarcity

The company’s power has also been amplified by scarcity. During key phases of the AI buildout, demand for advanced accelerators overwhelmed supply. That scarcity raised prices, extended lead times, and made access to top-tier chips a competitive advantage in itself.

For hyperscalers, that meant spending aggressively to secure future capacity. For startups, it often meant renting compute from cloud providers at premium rates or accepting that model training schedules would be constrained by infrastructure bottlenecks. For everyone else, it meant the cost of participation in advanced AI remained stubbornly high.

This is one reason the market started treating Nvidia less like a conventional semiconductor company and more like a toll collector on the AI highway. Every major AI deployment, directly or indirectly, seemed to run through its technology.

When compute is scarce, the supplier does not just sell parts. It shapes strategy.

That dynamic has huge implications for AI economics. If the cost of chips, power, networking, and cooling stays elevated, the dream of cheap, ubiquitous AI becomes harder to realize. Enterprises may keep experimenting with AI, but they will demand clearer returns on investment before scaling further.

What rivals need to prove

It is not enough to be cheaper

AMD, Intel, custom silicon teams, and cloud providers all see the opportunity. They know the current market structure leaves room for alternatives, especially if customers want lower costs or more control. But cheaper silicon alone will not be enough.

To really threaten Nvidia, competitors need to solve several problems at once:

- Performance consistency: strong real-world results across training and inference workloads.

- Software maturity: tools that reduce or eliminate migration headaches.

- Cloud availability: broad support from major providers.

- Operational trust: reliable supply, support, and clear roadmaps.

That is a daunting checklist. It helps explain why so many customers publicly talk about diversification while continuing to buy more Nvidia systems.

Custom chips could change the balance

The most serious long-term challenge may come from hyperscalers building their own silicon. If large cloud platforms can design accelerators tailored to their internal workloads, they can cut costs, reduce dependence on external vendors, and optimize tightly for their own services.

Still, custom silicon is not a universal solution. Designing chips is expensive. Supporting them at scale is difficult. And many enterprise customers prefer standard platforms with proven tooling over highly specialized alternatives. That means custom chips can nibble at the edges of Nvidia’s dominance without immediately collapsing it.

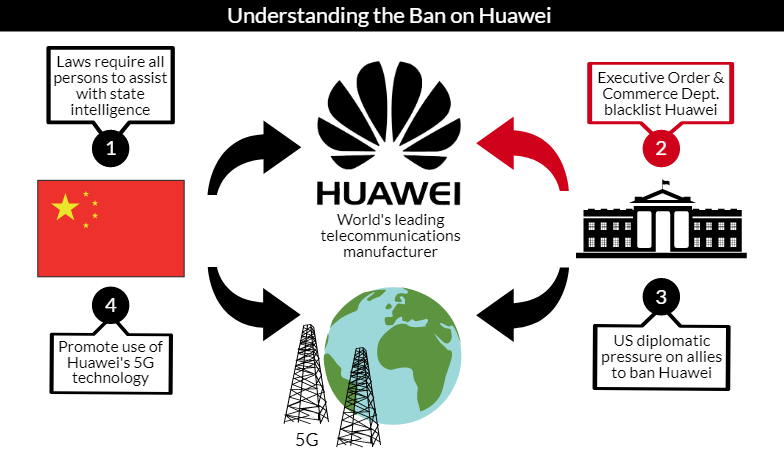

Nvidia in the geopolitical crossfire

AI chips are no longer just a business story. They are a geopolitical one. Governments increasingly view advanced semiconductors as strategic assets tied to economic power, military capability, and national security. Export controls, manufacturing concentration, and cross-border technology restrictions all now shape the AI hardware market.

That puts Nvidia in a uniquely sensitive position. It benefits from global demand, but it also operates inside a framework where governments may limit where certain high-end products can go and how they can be used. The company is big enough to influence national conversations, but not big enough to ignore them.

For businesses, this means AI procurement is becoming more political. Supply planning, data residency, and vendor exposure are no longer niche concerns. They are board-level issues.

Why this matters: the future of AI may be determined as much by trade policy and fabrication capacity as by breakthrough model architecture.

Can customers escape the Nvidia trap?

The word trap may sound dramatic, but it captures a real tension. Customers want the best tools, but they also want bargaining power. The more dependent they become on a single vendor’s hardware and software ecosystem, the harder it gets to negotiate pricing, timelines, and long-term strategy.

There are ways to reduce that risk:

- Design AI workflows with portability in mind.

- Test inference on multiple hardware backends.

- Avoid overcommitting to one optimization path too early.

- Push vendors for open standards and transparent roadmaps.
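
One way to act on the second item is a small harness that runs the same inputs through each backend and checks that the outputs agree. The sketch below is an assumption-laden illustration: the backend names `vendor_a` and `vendor_b` and the two toy functions are hypothetical stand-ins for real inference calls on different hardware.

```python
from typing import Callable, Dict, List

Backend = Callable[[List[float]], List[float]]


def run_vendor_a(inputs: List[float]) -> List[float]:
    # Hypothetical stand-in for inference on the incumbent hardware.
    return [2.0 * x for x in inputs]


def run_vendor_b(inputs: List[float]) -> List[float]:
    # Hypothetical stand-in for the same model on an alternative backend.
    return [x + x for x in inputs]


BACKENDS: Dict[str, Backend] = {
    "vendor_a": run_vendor_a,
    "vendor_b": run_vendor_b,
}


def compare_backends(
    backends: Dict[str, Backend],
    inputs: List[float],
    tolerance: float = 1e-6,
) -> Dict[str, bool]:
    """Run identical inputs through every backend.

    The first backend in the dict is treated as the baseline; each
    entry in the result says whether that backend matched it within
    the given tolerance.
    """
    names = list(backends)
    baseline = backends[names[0]](inputs)
    report = {}
    for name in names:
        outputs = backends[name](inputs)
        report[name] = len(outputs) == len(baseline) and all(
            abs(a - b) <= tolerance for a, b in zip(outputs, baseline)
        )
    return report


print(compare_backends(BACKENDS, [1.0, 2.5]))
```

Keeping the model call behind a plain function interface like this is a design choice, not a requirement: it means a new backend can be added to the comparison without touching the rest of the pipeline.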

Even so, most organizations cannot optimize for independence alone. They have to ship products, train models, and meet deadlines. If Nvidia remains the fastest path to execution, many buyers will accept the tradeoff.

What happens next for Nvidia

Growth is no longer the only question

The next chapter is less about whether Nvidia can keep growing and more about whether it can convert extraordinary demand into durable strategic control without triggering sharper resistance. That resistance can come from customers, competitors, regulators, or even macroeconomic shifts that cool the current AI spending spree.

If enterprise AI adoption broadens, Nvidia could deepen its position as the default compute layer for the industry. If budgets tighten and customers focus more on efficient inference than giant model training runs, pricing pressure may increase and specialized alternatives could gain ground.

There is also the question of valuation and expectations. Once a company becomes synonymous with the hottest market in tech, the burden changes. It no longer needs to be merely excellent. It has to keep proving that its dominance is sustainable.

The hardest part of leading the AI boom is staying indispensable after the boom matures.

The smarter reading of the market

It is easy to frame the story as simple hype or simple inevitability. Neither is quite right. Nvidia earned this position through technical execution, ecosystem discipline, and uncanny timing. But its current power also reflects a market that moved so quickly it had little patience for second-best options.

That can change. Infrastructure markets eventually get more contested. Standards improve. Buyers become savvier. Margins attract challengers. The question is not whether competition will appear. It is whether that competition will arrive before Nvidia hardens its role as the indispensable operating layer for AI itself.

Why Nvidia’s dominance should command attention

This is bigger than one earnings cycle or one product generation. Nvidia’s dominance reveals how modern tech power works: not just through invention, but through control of ecosystems, supply chains, and the practical tools developers rely on every day. That is why this moment deserves more scrutiny than celebration.

Yes, Nvidia is enabling remarkable progress in AI. But it is also concentrating influence over a foundational layer of the next computing era. For enterprises, that means cost and dependency questions. For governments, it means strategic vulnerability. For rivals, it means the bar is far higher than building a fast chip.

The AI race is often described as a battle over models. Increasingly, it looks like a battle over compute. And in that fight, Nvidia is not merely participating. It is setting the terms.
