Why AI Distrust Could Cost America

American AI distrust is no longer a mood or a polling quirk – it is becoming a strategic liability. While the United States debates whether artificial intelligence is dangerous, overhyped, or socially corrosive, China is pushing ahead with a far more coordinated embrace of the technology. That contrast matters. AI is not just another consumer trend or venture capital obsession. It is quickly becoming core infrastructure for productivity, defense, education, logistics, healthcare, and state power. If one country treats AI as a threat to contain while another treats it as a capability to scale, the long-term consequences will not stay confined to Silicon Valley. They will shape economic growth, labor markets, geopolitical leverage, and the next generation of global platforms.

- American AI distrust could slow adoption at the exact moment AI is becoming strategic infrastructure.

- China’s approach combines public acceptance, state coordination, and industrial policy.

- The real risk is not skepticism itself, but failing to separate legitimate caution from reflexive rejection.

- Businesses, policymakers, and workers need a practical framework for responsible AI adoption.

American AI distrust is becoming a competitive problem

The core tension is simple: Americans increasingly view AI through the lens of fear, while China is more willing to frame it as progress. That divergence is not just cultural. It affects investment decisions, regulation, talent development, public-private partnerships, and how quickly organizations deploy tools that improve efficiency.

Some skepticism is healthy. Generative AI systems hallucinate. Automated decision tools can reinforce bias. Data privacy concerns are real. Workers are right to worry about displacement. But a country can acknowledge those risks and still move aggressively to build capacity. The problem starts when caution hardens into paralysis.

When a foundational technology is treated mainly as a social threat rather than a national capability, competitors gain time, scale, and experience.

This is where the American conversation often breaks down. Public debate tends to swing between utopian salesmanship and apocalyptic panic. Neither is useful. The more important question is whether the US can build trust fast enough to deploy AI where it creates genuine value.

Why China’s AI embrace changes the stakes

China’s support for AI is not simply about optimism. It is strategic. The country has spent years integrating technology ambitions with industrial policy, education goals, infrastructure planning, and national competitiveness. That does not mean China has solved every problem around safety, ethics, or misinformation. It means China is more willing to absorb those problems while continuing to scale.

That matters because AI rewards iteration. The more a country deploys systems across manufacturing, transportation, public services, and research environments, the faster it learns what works. Usage creates feedback loops. Feedback loops improve products. Improved products attract more users and more capital. Over time, that compounds.

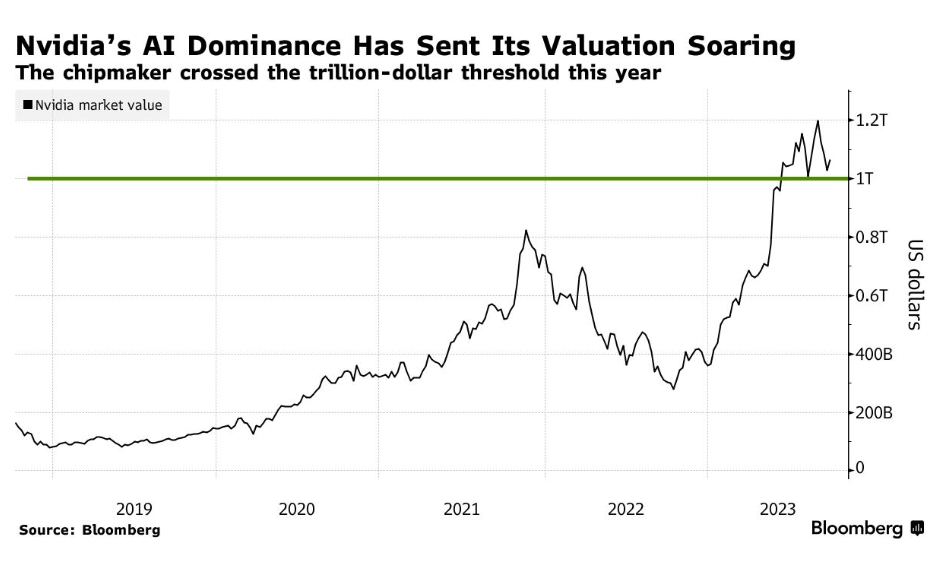

The US still leads in key areas, especially frontier model development, advanced chip design, startup culture, and top-tier research institutions. But leadership in invention does not automatically translate into leadership in adoption. History is full of technologies pioneered in one place and industrialized at greater scale somewhere else.

Scale matters more than hype

One reason this debate feels muddled is that many people still think of AI as a chatbot phenomenon. That misses the bigger picture. The real power of AI sits behind the scenes: supply chain optimization, fraud detection, drug discovery, customer service automation, predictive maintenance, precision manufacturing, and decision support.

If China accelerates adoption across those sectors while the US remains culturally ambivalent, the gap will show up not just in flashy demos but in industrial performance. Faster production cycles, lower operating costs, stronger logistics, and more adaptive public systems all create national advantages.

Public sentiment can become industrial policy by accident

Governments write formal policy, but public distrust often writes informal policy. Schools ban tools. Companies delay deployments. Regulators respond to worst-case headlines. Workers resist experimentation because leadership has failed to explain the upside. The result is a soft freeze that slows progress even without explicit prohibition.

That kind of drag is easy to underestimate. No single decision kills momentum. Instead, momentum leaks away through hesitation.

The case for skepticism is real – but incomplete

It would be a mistake to dismiss public concern. People have valid reasons to distrust AI. Tech platforms have burned credibility for years with overpromising, underexplaining, and externalizing social costs. Consumers have seen algorithms amplify junk, surveillance, and manipulation. Workers hear efficiency rhetoric and understandably translate it as layoffs.

So yes, distrust has a rational foundation. But if the response is to avoid adoption altogether, the costs may be even higher. Countries that fail to build literacy around transformative technologies tend to become rule-takers rather than rule-makers. They consume systems shaped elsewhere, under standards they did not define.

The smartest posture is not blind faith or blanket fear. It is disciplined adoption with clear safeguards.

That distinction matters. A society can demand transparency, testing, auditability, and labor protections while still treating AI as an engine of national renewal.

How America can respond to AI distrust without falling behind

If the US wants to avoid turning American AI distrust into a self-inflicted handicap, it needs a more credible playbook. That starts with accepting that trust is built operationally, not rhetorically.

1. Focus on useful AI, not theatrical AI

The public is more likely to trust systems that solve visible problems. A hospital using AI to reduce diagnostic delays. A manufacturer using machine learning for predictive maintenance. A school system using carefully governed tools to support tutoring rather than replace teaching. Practical wins change perception faster than abstract promises.

2. Build guardrails into deployment

Trust improves when people understand the rules. Organizations should define where AI can be used, where human review is mandatory, and how outputs are tested.

- Human oversight: Require review for high-impact decisions.

- Data governance: Limit sensitive data exposure and document access controls.

- Model evaluation: Test for accuracy, bias, and failure cases before launch.

- Worker communication: Explain whether tools augment roles, automate tasks, or change job design.

Even simple internal standards can help. A company-level policy might start with a rule as plain as: if risk_level == "high": require_human_review = True. That is not mere compliance theater. It signals that adoption is being managed deliberately.
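To make the four guardrails above concrete, here is a minimal Python sketch of an internal review gate. It assumes a hypothetical UseCase record that captures a risk level, whether sensitive data is involved, whether access controls are documented, whether pre-launch evaluation passed, and whether affected workers have been briefed. None of these names come from a real framework or standard; each check simply maps to one guardrail in the list.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskLevel(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class UseCase:
    """A proposed AI deployment (hypothetical fields, for illustration only)."""
    name: str
    risk_level: RiskLevel
    uses_sensitive_data: bool
    access_controls_documented: bool
    passed_evaluation: bool  # accuracy, bias, and failure-case tests done before launch
    workers_informed: bool   # affected staff told how the tool changes their work


@dataclass
class Decision:
    approved: bool
    require_human_review: bool
    reasons: list[str] = field(default_factory=list)


def review_use_case(uc: UseCase) -> Decision:
    """Apply the four guardrails to one proposed use case."""
    reasons: list[str] = []

    # Data governance: sensitive data needs documented access controls.
    if uc.uses_sensitive_data and not uc.access_controls_documented:
        reasons.append("sensitive data without documented access controls")

    # Model evaluation: nothing launches without pre-launch testing.
    if not uc.passed_evaluation:
        reasons.append("model has not passed pre-launch evaluation")

    # Worker communication: explain the change before deployment.
    if not uc.workers_informed:
        reasons.append("affected workers have not been briefed")

    # Human oversight: high-impact decisions always get human review.
    require_review = uc.risk_level is RiskLevel.HIGH

    # Approve only when no blocking reason was found.
    return Decision(approved=not reasons, require_human_review=require_review, reasons=reasons)


if __name__ == "__main__":
    # Hypothetical example: a high-risk use case that meets every other guardrail.
    triage = UseCase(
        "loan triage",
        RiskLevel.HIGH,
        uses_sensitive_data=True,
        access_controls_documented=True,
        passed_evaluation=True,
        workers_informed=True,
    )
    print(review_use_case(triage))
```

The gate separates two outcomes on purpose: a use case can be approved and still require human review, which mirrors the idea that high-impact work is allowed but supervised rather than banned.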

3. Treat AI literacy as economic infrastructure

The distrust problem is partly an education problem. People tend to fear what feels opaque and uncontrollable. National competitiveness will depend on a workforce that understands how AI tools function, where they fail, and how to work alongside them.

That means expanding technical training, yes, but also basic operational literacy for non-engineers. Managers, teachers, nurses, warehouse workers, civil servants, and small business owners all need practical frameworks. What should they automate? What should they never automate? How do they verify outputs? What data should never be entered into a public model?

These are not niche questions anymore. They are workforce questions.

Why this matters for business, labor, and national power

The American AI distrust debate is often framed as culture-war material, but the implications are concrete. For business, slower adoption means lower productivity growth and weaker competitiveness. For labor, it means workers may be less prepared for inevitable workflow changes. For government, it means reduced capacity to shape standards in a technology that will influence everything from intelligence analysis to public service delivery.

There is also a timing issue. AI adoption is not a one-time switch. The organizations that begin now will build the best data pipelines, governance habits, and operational instincts. Late movers will not just be behind on tools. They will be behind on institutional learning.

The productivity angle is easy to miss

Generative AI has attracted most of the headlines, but the broader story is productivity. Small gains across millions of workflows can reshape an economy. Faster document processing. Better forecasting. Lower customer support costs. Improved software development speed. More efficient procurement. Individually, these improvements can look incremental. At scale, they become transformative.

That is why broad social distrust matters so much. If the public climate discourages experimentation, those gains arrive later or go elsewhere.

National security is part of the equation too

No serious country can treat advanced AI as purely a consumer issue. It has implications for cyber defense, intelligence fusion, autonomous systems, logistics, and battlefield decision support. Even if citizens mainly encounter AI through chatbots and image generators, states will encounter it through strategic competition.

That does not justify careless acceleration. It does mean that falling behind has consequences beyond market share.

The smarter path is confidence, not complacency

America does not need propaganda about AI. It needs competence. Public trust will not be restored by telling people everything is fine. It will be restored when institutions show they can deploy powerful systems responsibly, explain the tradeoffs clearly, and produce outcomes that feel tangible.

The good news is that the US still has enormous advantages: top research universities, deep capital markets, world-class engineers, and leading platform companies. The bad news is that advantages erode when a society cannot align around execution.

The future of AI leadership will be decided less by who invents first and more by who learns to adopt at scale without losing public legitimacy.

That is the real challenge hidden inside this debate. Distrust can be a safeguard, but it can also become a brake. If the United States confuses skepticism with strategy, it may discover too late that the real risk was not embracing AI too quickly – it was refusing to build trust quickly enough to compete.

Pro Tip: For business leaders, the best near-term move is not chasing every new model release. It is identifying one repeatable workflow, applying governed AI tools, measuring results, and building internal confidence from there. Trust grows faster when it is attached to evidence.
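The "measuring results" step in this tip can be as lightweight as a before/after comparison. The Python sketch below assumes a team has logged handling time in minutes per task, plus an error count, for the same workflow before and during a pilot. The function names and the sample numbers are hypothetical, chosen for illustration rather than drawn from any real deployment.

```python
from statistics import mean


def pct_change(baseline: float, pilot: float) -> float:
    """Percentage change from baseline to pilot; negative values mean a reduction."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (pilot - baseline) / baseline * 100


def compare_pilot(
    baseline_minutes: list[float],
    pilot_minutes: list[float],
    baseline_errors: int,
    pilot_errors: int,
) -> dict[str, float]:
    """Summarize a before/after pilot on one repeatable workflow.

    Each list holds handling time (minutes) per task; each error count
    covers the same tasks as its list.
    """
    base_avg = mean(baseline_minutes)
    pilot_avg = mean(pilot_minutes)
    return {
        "avg_minutes_baseline": base_avg,
        "avg_minutes_pilot": pilot_avg,
        "time_change_pct": pct_change(base_avg, pilot_avg),
        "error_rate_baseline": baseline_errors / len(baseline_minutes),
        "error_rate_pilot": pilot_errors / len(pilot_minutes),
    }


if __name__ == "__main__":
    # Hypothetical sample numbers for illustration only.
    summary = compare_pilot([30, 34, 26, 30], [20, 22, 18, 20], baseline_errors=2, pilot_errors=1)
    for metric, value in summary.items():
        print(f"{metric}: {value:.2f}")
```

Tracking error rate alongside speed matters: a pilot that is faster but less accurate would not build the kind of evidence-based confidence the tip describes.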